The Multiple Dimensions Of Abstraction Beyond Programming

From June to the end of September 2024, I wrote my master's thesis, titled "Abstraction as Problem-Solving tool, and its dimensions in Content Creation Engines beyond conventional programming."

At the time, I was juggling many side projects and not giving myself the space to fully digest my own findings and theory. With this article, I want to present my theoretical insights in a more concise and pragmatic form, highlighting their applicability and making the academic concepts more digestible through concrete examples.

I will conclude by illustrating these concepts in an extensive case study: a workflow designed for Virtual Production in Unreal Engine 5, demonstrating how abstraction can be used strategically to tackle large projects.

I won’t deny it—this article is long. But certain lines of thought required more elaboration and careful synthesis.

So, let’s begin.

A little bit of Background

Abstraction is most commonly seen as a pillar of object-oriented programming, often listed alongside Inheritance, Polymorphism, and Encapsulation. However, I believe that this strict segmentation misses the bigger picture. In fact, these four pillars are all facets of the same core principle: abstraction.

Let me explain.

Abstraction is the art of creating a simplified representation of a complex reality by intentionally ignoring irrelevant details. Viewed in this light, the connections become clear:

- Inheritance is an act of abstraction. It defines a fundamental, more abstract superclass from which specific, more concrete subclasses are derived.

- Polymorphism, or the use of interfaces, allows us to interact with an object while abstracting away its full complexity, focusing only on the features required for a given task.

- Encapsulation is achieved through abstraction. It hides internal implementation details, allowing us to focus on the essential, public-facing aspects of a system.

By viewing these concepts through the lens of abstraction, we can move beyond rigid preconceptions. Abstraction is not limited to object-oriented programming or even programming in general—it extends far beyond these boundaries. This article is an exploration of that idea, focusing on multiple examples to clarify the practical uses of abstraction and encouraging us to think more abstractly about abstraction itself.

Motivation

My motivation for this topic can be traced back to "How I became a creative technologist and what role abstraction played in it." In short, just as I realized that the four pillars of object-oriented programming converge on abstraction, I found that most of my favorite activities, passions, and problem-solving approaches also converge on abstraction. This realization came while I was working on two projects side by side in 2024 (one in Unity, the other in Unreal Engine 5), both with small teams and heavy use of the engine editors. My main thesis is that coding and working in the editor can be understood as forms of notation, metaphor, or abstraction that organize ideas and translate creative visions into reality. This was also an intrinsic goal I failed to articulate during my evaluation; I only came to realize it later, as the essence of my research matured within me.

I was able to handle very complex scenarios by intentionally ignoring or postponing (from here on called deferring) decisions and implementation details, allowing me to focus on a presentable "whole" (as opposed to isolated features) that remained iterable. This approach helped me deal with unknown factors, time constraints, lack of manpower, and inexperience with some aspects of the system. I found this approach worth sharing, and I wanted to embed it in academic discourse. So I decided to first investigate and formulate a framework that allows for a wide range of applications of abstraction.

Goal

Formulate a general theoretical framework to express the uses of abstraction universally. This is basic research, not applied research. In other words, I believe that through this framework it is possible to verbally express effects related to abstraction across domains, and to frame these effects in dimensions relevant to art, design, creative processes, and programming—my main areas of interest. I will showcase examples from these fields throughout the article to support my points.

However, I am not claiming that the process described here is directly applicable to practical work. It lacks a targeted application as evidence of practical use and was not researched with that goal in mind, so such a claim would overreach the evidence I present and fall outside the scope of my original intention. Nevertheless, I will demonstrate the framework in action to articulate what I came to realize about my personal approach.

Abstraction as a Tool

Abstraction is a conceptual activity that enables us to focus partially on any object with respect to a specific purpose or context. It is also a simplified representation that reflects and supports this selective attention. By abstracting, we generate more flexible objects that have certain known features (primary or distinguishing characteristics) which define the abstraction, while other aspects can be replaced, ignored, or left undefined.

- Generalizable and replaceable parts improve flexibility and reusability.

- Temporarily ignoring parts can decrease cognitive load and promote focus.

- Deliberately leaving pieces undefined encourages iterability and extensibility.

Common abstractions include patterns and interfaces, which help create boundaries between what must remain constant and what can change. This is the pragmatic value of abstraction. In fact, this definition alone already captures abstraction’s role as a tool—an aspect I somewhat neglected in my thesis, focusing more on the following sections.

But now to the core question: How does abstraction manifest?

Or, put differently: along which dimensions does this effect occur? My over-ambitious goal was to identify as many different applications and contextual uses for abstraction as possible, and then reframe all of these within a cohesive model, which I called “dimensions.”

What do I mean by dimensions?

Let me use an analogy to clarify what I mean by "dimensions." Think of three-dimensional space: we have the X, Y, and Z axes. For the sake of clarity, let's say the X-axis runs from left to right, the Y-axis from bottom to top, and the Z-axis from near to far. In this space, any object always has a value in all three dimensions, and these dimensions are independent of each other (unless a specific constraint or rule is applied).

I believe abstraction operates in a similar way. Each "dimension" of abstraction is an independent axis along which we can describe or analyze an idea, object, or system. Sometimes it's easier to talk about the extremes of a dimension; other times, it's more useful to name the axis itself. Importantly, these dimensions coexist and can be considered simultaneously—no single dimension overrides or determines the others.

Just as every object in physical space has a position along each axis, any abstraction can be described in terms of its position along each of these conceptual dimensions.

Dimension: Conceptual and Representational

When I first introduced abstraction, I emphasized that the activity of abstraction involves partial attention or, as an object, a partial representation. Let’s put this activity into practice.

This is an image of a chair—not just any chair, but this specific chair. Now, the question is: how do I know this is a chair? Is it simply the arrangement of brown pixels over a white background, such that any substantially different configuration of pixels would not be a chair? No, this is a bitmap of a chair: a digital rendering of pixels representing a photograph of a chair.

So, is a chair exactly what is shown in the photograph—a wooden plank with four legs and supporting beams? Absolutely not.

Ask yourself: Does a chair have to be made of wood? Does a chair have to have four legs? Not necessarily.

The concept of what you think is a chair is an abstraction of this concrete example.

I would define a chair as anything to sit on, that supports your hips as well as your back, usually fitting one person. Here, I tried to find the most general idea of what a chair is, in a functional sense, so that it qualifies as furniture.

If we were to abstract it even further, removing more features, we might end up with a definition such as "something reserved for one person." This would facilitate metaphorical uses of “chair” when referring to positions in institutions, like chairs in parliament. We will explore the relationship between metaphorization and abstraction in the next dimension.

This example not only shows how abstraction enables communication and association, but also illustrates what I call the first dimension: an axis from the concrete and specific representation (the representational space) to the universal and conceptual space. From a rendered image to an idea. Now, let’s move from the idea back to the representation with a few examples.

Applicability

I will now showcase the application of abstraction using an already abstract concept: a shadow. What do I mean by 'abstract' concept in this context? As we have seen, something abstract is intentionally unspecified and can therefore be ambiguous, adopting different meanings in different contexts. It has many forms depending on how we use it—polymorphic, in a sense. Let us see just how powerful this is.

The word "shadow" itself is not a concept; it is just a word. Most people, however, will associate it with a concept. The more poetically minded might think of idioms such as "the shadow side" or "he is but a shadow of himself," connecting the abstraction to other concepts through language. Meanwhile, the more visually minded may picture a shadow as something dark and looming. So let's give it an abstract definition: "a region of absence of light." Notice how we bring simpler or related concepts into a relationship to define what a shadow means. By doing so, we focus on some defining features of shadows (region, absence, and light) while ignoring others. Discussing shadow in this way makes analogical transfers easier. With one more conceptual abstraction, we could rephrase it in even more "abstract" terms: anything that is modeled as a region defined by the absence of something can be used as a pattern for shadow and invoke the idea of a shadow.

This is also a technique used in biomimicry, where language functions as a vehicle for abstraction by translating domain-specific observations from nature into a neutral or abstract form, which can then be applied to derive technical solutions.

As a creative technologist, this becomes an important aspect of concepts as abstractions.

Artists could interpret this in different forms:

Poster designers, using the language of shapes and colors, could apply this principle to design a graphic composition where one region is pictorial and another is typographical, expressing the idea that "a picture says more than a thousand words"—making the picture overshadow spoken language.

Composers could model this idea with musical textures: one deeper melodic development dissolving and giving space for a higher one, exploring the concept with notes and motivic development. This is actually done in Shostakovich's Piano Concerto No. 2, 2nd Movement.

Game designers might interpret this concept through game mechanics and gameplay. For example, a game about discovering regions of a world that can only be reached in the absence of something else, or a game in which the player moves between two coexisting realities, with one reality missing something present in the other.

Notice how ideas paraphrased in terms of the abstract decomposition of a concept lay the foundation for new creative concepts.

As programmers, we can also use this idea. Suppose we already have a system capable of representing Light and Region. To represent shadows, we can use our abstract definition to bring existing concepts together—for example, by defining a Shadow in our program as a Region of zero Light, or perhaps even -Light. To generalize further, a Shadow<T> could be a shadow of any other object that requires this concept.
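A minimal Python sketch of this idea could look as follows. It is an illustration under assumptions, not a definitive design: `Region`, `Light`, `Sound`, and the fields and methods of `Shadow` are hypothetical stand-ins for whatever the existing system provides, and `Shadow[T]` plays the role of `Shadow<T>`.

```python
from dataclasses import dataclass
from typing import Generic, Type, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class Region:
    """Hypothetical axis-aligned rectangle standing in for the system's Region."""
    x: float
    y: float
    width: float
    height: float

    def contains(self, px: float, py: float) -> bool:
        return (self.x <= px <= self.x + self.width
                and self.y <= py <= self.y + self.height)


@dataclass(frozen=True)
class Light:
    """Hypothetical stand-in for the system's Light."""
    intensity: float


@dataclass(frozen=True)
class Sound:
    """A second, unrelated concept, used only to show the generalization."""
    volume: float


@dataclass(frozen=True)
class Shadow(Generic[T]):
    """A region defined by the absence of something of type T."""
    region: Region
    absent: Type[T]

    def covers(self, px: float, py: float) -> bool:
        return self.region.contains(px, py)

    def describe(self) -> str:
        return f"a region of absent {self.absent.__name__}"


# The same pattern yields a visual shadow and an acoustic one.
light_shadow = Shadow(Region(0, 0, 2, 1), Light)
quiet_zone = Shadow(Region(5, 5, 1, 1), Sound)
```

Nothing in `Shadow` knows about light specifically; it only combines a region with the kind of thing that is missing, which is what makes the definition transferable.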

This demonstrates how abstraction enables flexibility and reusability from a purely conceptual perspective, even in programming. But when we talk about programming, we also need to consider two concepts that, in my theory, occupy the same position on this axis but are concerned with the abstraction of processes and actions: Intention and Implementation.

During my research, I encountered an interesting analogy between cognitive levels of action and computational levels of abstraction.

Marr defines the highest level, the computational level, as the behavior of a system: its most abstract, conceptual goal. Similarly, cognitive scientist Wurm describes the most abstract level of action as the intent, for example the intention to open a door. These two theories converge in our role as system designers, where we aim to express an intent through system behavior.

Marr then moves to the algorithmic level: the step-by-step instructions the system follows to realize the behavior. In parallel, Wurm’s levels of abstraction progress to the movement level, where chronologically ordered changes in position (movements) are visualized and articulated to achieve the initial intent. The analogy becomes clear if we view the sequence of movements as an algorithm.

Finally, Marr discusses the implementation level: the system components, such as the programming language or the physical parts of a circuit board, which translate the algorithm into actionable steps. Wurm’s final level is muscle activation, where individual muscles contract or relax to execute the movement pattern.

(This progression—from a goal-oriented conceptualization to a concrete collection of components articulated through steps—also parallels my conceptualization of Strategy, Plans, and Tactics. However, exploring this further would exceed the scope of an already extensive article.)

In summary, we can align these two frameworks with our existing dimensions by defining Intent as the conceptual side of an action, and Implementation as the representational, more concrete realization of that intent.
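The three levels can be sketched in a few lines of Python. This is only an illustration under assumed names: `open_door`, `open_door_steps`, and `make_actuator` are hypothetical, and the "actuators" merely log what they are asked to do.

```python
from typing import Callable, List

Actuator = Callable[[str], None]


def make_actuator(log: List[str], name: str) -> Actuator:
    """Implementation level: a hypothetical body that executes single commands."""
    def act(command: str) -> None:
        log.append(f"{name}: {command}")
    return act


def open_door_steps() -> List[str]:
    """Algorithmic / movement level: ordered steps that realize the intent."""
    return ["reach for handle", "press handle down", "pull door"]


def open_door(actuator: Actuator) -> None:
    """Computational level / intent: callers only ask for an open door."""
    for step in open_door_steps():
        actuator(step)


log: List[str] = []
open_door(make_actuator(log, "human arm"))
open_door(make_actuator(log, "robot arm"))
```

The intent and the sequence of steps stay the same while the implementation is swapped, which is the separation between Intent and Implementation described above.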

Dimension: Logical and Intuitive

By allowing us to distance ourselves from an object in reality (deliberately ignoring features), abstraction reduces our cognitive load and makes us more mentally agile. In this way, it unburdens us from details and unlocks intuition. This is also what E. Sickafus [1] claims when discussing heuristics: thinking tools that use abstraction by adopting a deliberately vague language, similar to biomimicry. Instead of providing new solutions, heuristics offer new approaches, reframing innovation as a fresh problem to be solved. While Sickafus relates this to the now-questionable theory of right- and left-brain hemispheres, I prefer to connect it to System 1 and System 2 from "Thinking, Fast and Slow" [2]. System 1 is the reflexive, fast, and intuitive mode of thinking, where we easily drift, find new connections, and follow different perspectives unburdened by details. System 2 is the analytical mode, carefully processing data and digging deep into experience; it is precise, effortful, and slower. Both are needed for effective problem-solving.

Consider the heuristic "do it in reverse." It is:

- Simple

- Unspecific

This qualifies as an "abstract" statement. Its value lies in not constraining the mind with problem specifics. "Do" implies modifying an action, "it" refers to the object of the problem, and "in reverse" suggests a counterintuitive direction or new perspective.

Just as abstraction allows us to move from concrete representation to conceptual ideas—existing in both conceptual and representational spaces—I argue that abstraction enables transitions between analytical and intuitive thinking modes. Abstraction itself has both a logical and an intuitive operational mode. Most sources focus on the logical mode: reducing cognitive effort, ignoring details, and finding simpler representations.

Therefore, in this section, I will focus more on the intuitive side. Let's begin with metaphorization. P. Arnoux and J. Soto‐Andrade have evaluated the use of metaphors to teach mathematical concepts, relating metaphors to abstraction [3]. They argue that we represent what we already know and use metaphors to connect different concepts. Metaphorization is a cognitive tool for constructing new concepts. In terms of our first dimension, we represent by moving from the conceptual space into the representational space, and we metaphorize by moving from representation to concept. This is a powerful use of abstraction, which will be showcased in our main example. For now, let's move on to abstraction and intuition in creative thinking.

In his paper "Universal Language of Thoughts? Abstraction and Creativity," Prof. Teodorescu describes the escalation of layers of awareness through abstraction for creative thinking.

- The paradigmatic level answers "What is that?"—the first abstraction layer above direct experience. Like the chair example, we shift focus from this specimen to the specimen as a generalization, freeing it from materiality and color. This level allows for aesthetic diversification.

- Raising abstraction further, the pragmatic level answers "What is that for?"—removing the pre-conceived object as a whole and abstracting it to its purpose. For example, defining a chair as "something for one person to sit on." This level is free of substantive terms; the abstraction is seminal and opens a highway for divergent thinking.

- At the etiological level, we ask "Why?"—stripping the object even of its pragmatic use and questioning fundamental assumptions. Beyond this is the axiological level, which transforms the search for purpose into an ethical one, but Teodorescu notes that at this point, creativity converges to a few archetypes. We will stop here.

This progression demonstrates the power of abstraction to enter different levels of awareness, enabling intuitive exploration. With each step up the ladder of abstraction, we loosen boundaries and free ourselves from the fundamental questions that keep us bound to lower, logical answers—thus expanding our solution space and innovative thinking paths. We free the "this" from empirical reality, the "a" to a "how," and the "how" to a "why."

Let's see this dimension in action!

Applicability

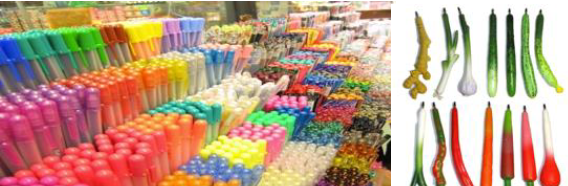

Borrowing from an example by Sickafus, I will show a creative process that constantly shifts between logic and intuition. In his example, he invents a new pen.

Starting with a generic pen, the first strategy is to generalize it to a writing implement to understand what it does or does not do. In abstract terms: it physically couples a user to a surface on which marks are to be made. This abstraction unlocks logical thinking paths, focusing on attributes like mass and shape. Continuing along the intuitive path, we might ask: What attributes at the point of contact are not currently active? No odor is emitted to activate smell, no flavor for taste, no sound for hearing, no vibration for touch, no light for sight. These are intuitive beginnings, followed by logical connections to the original concept. The brain wants—needs—to close the gap. We drift, then we weave: System 1 into System 2.

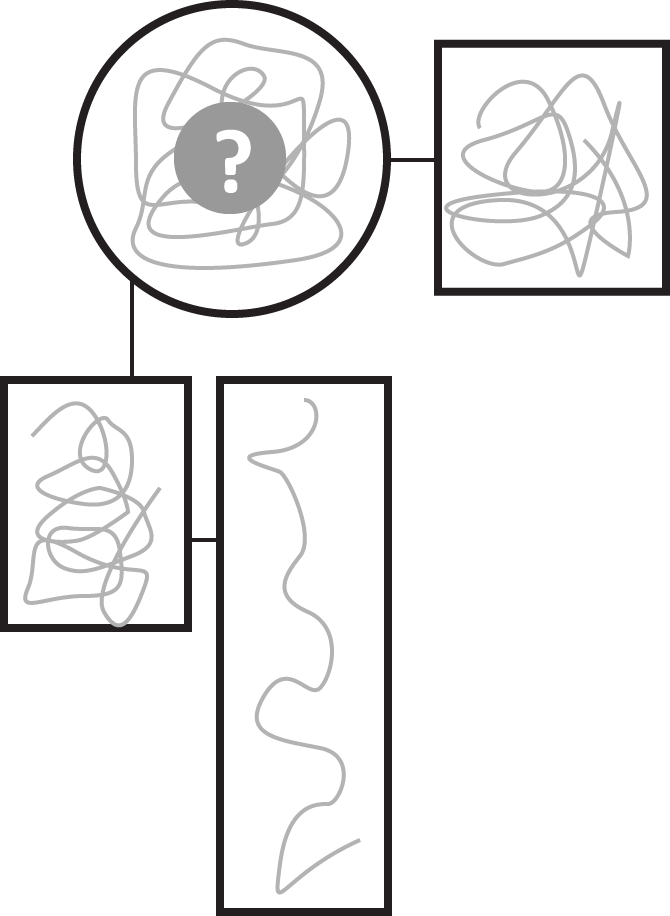

This demonstrates how abstraction enables us to shift between logical and intuitive spaces on this axis. Now, let's see how abstraction escalates through Teodorescu's levels of awareness, drifting away from the constraints of logical reality—again, using a pen.

Imagine you are tasked with inventing a new pen. You observe the pen before you. At first, nothing seems wrong. Only by abstracting the pen do you enter a mindset that allows you to replace features and get creative.

Similar to the chair example, let's abstract to the paradigmatic level and strip away features. It is no longer this pen, but a pen. As long as it remains a pen, we can do whatever we want—change its color, shape, or material.

I used images from the paper because the visual/iconographic approach shows the vast solution space already available at this level of abstraction, though we are still limited to visual modifications—superficial redesigns. For the next levels, we enter a purely conceptual realm and can omit images.

At the pragmatic level, we ask: What is it for? This transforms our pen into an abstraction free of iconographic connotation: a device to make signs. Now, our abstraction allows us to reframe the object in terms of other concepts. What is a sign? How can a pen make that kind of sign? What must we modify to enable the pen to make these signs? These are logical follow-up questions to root the abstraction back in reality.

At the etiological level, we ask: Why make signs? We might answer: something that records thoughts and experiences. Here, we have stripped away the mechanism to reveal intent, opening up even more possibilities.

What if we record the experience of drawing itself? Returning to logical space, we solve this problem. From the etiological level, we consider other objects that record experiences—like disks, which engrave grooves that can be read and played back. Those grooves are created by pressure and are signs on a physical medium.

So, what if we make a pen with a loose tip that records the pressure at every moment? While drawing, it records every change in pressure, which can later be read from a digital chip in the pen and transferred to a digital reader or program. We can transform that experience into a sequence of normalized values between 0 and 1 (yet another abstract representation), which becomes input to any system that accepts a temporal stream of such values (another abstraction).

For example, a generative music system: mapping moments of no pressure to rests, pressure to held notes, and intensity to pitch. In this way, the pen becomes a vehicle to transfer the activity of drawing into music generation—using only abstraction! It could be a playful gimmick for children or a thoughtful art installation.
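To make the mapping concrete, here is a small Python sketch. The pitch range, the rest threshold, and the `(pitch, duration)` note format are all assumptions chosen for illustration; a real pen or music system would define its own.

```python
from typing import Iterable, List, Optional, Tuple

# (MIDI-style pitch, or None for a rest; duration in samples)
Note = Tuple[Optional[int], int]


def pressure_to_notes(
    samples: Iterable[float],
    low_pitch: int = 48,       # assumed lowest pitch
    high_pitch: int = 84,      # assumed highest pitch
    rest_below: float = 0.05,  # assumed threshold for "no pressure"
) -> List[Note]:
    """Turn a stream of normalized pressure values (0..1) into notes and rests."""
    notes: List[Note] = []
    for pressure in samples:
        if not 0.0 <= pressure <= 1.0:
            raise ValueError("pressure samples must be normalized to 0..1")
        if pressure < rest_below:
            pitch: Optional[int] = None  # no pressure becomes a rest
        else:
            # intensity selects the pitch within the assumed range
            pitch = low_pitch + round(pressure * (high_pitch - low_pitch))
        if notes and notes[-1][0] == pitch:
            # unchanged pressure extends the current note: a held note
            notes[-1] = (pitch, notes[-1][1] + 1)
        else:
            notes.append((pitch, 1))
    return notes


stroke = [0.0, 0.0, 0.5, 0.5, 1.0, 0.0]
melody = pressure_to_notes(stroke)
```

For the sample stroke, `melody` is `[(None, 2), (66, 2), (84, 1), (None, 1)]`: a rest, a held middle note, a short high note, and a final rest. The function never needs to know that the values came from a pen.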

Dimension: Vertical

To reach both the conceptual and intuitive spaces of the previous two dimensions, we relied on one mechanism you might have noticed: raising the level of abstraction. Conceptually, this mechanism operates on a vertical dimension, from a little (low level) to a lot (high level) of abstraction. This dimension is closely related to Floridi's Method of Abstraction [1], which speaks about levels of abstraction inside a nested gradient of abstraction.

While the first two dimensions were concerned with the cognitive activity of abstraction (disassembling concrete representations and empirical realities into general ideas or possible intuitive associations), we now look at the first of two structural dimensions that shape a system through the use of abstraction. This can be understood quite literally as a dimension through which the system can be organized and navigated.

Think of it as a shift in perspective. From this perspective, we have a collection of components, perceived as individuals. In terms of Floridi's Method of Abstraction, they present observables. Let us, for example, describe what we see here:

Here, the observables are the motion on the Y-axis and the changing color from a desaturated blue to a bright red, in different intensities and frequencies: sometimes slowly pulsating, sometimes blinking. Let us describe the system: a row of squares (arranged along the X-axis) moves and blinks, shifting from a desaturated cold color to red. Most squares move at the same Y-velocity and blinking speed, but have randomly assigned initial blue tones. The second, sixth, and ninth squares from the left move slightly faster, even faster, and much faster, respectively.

The point is, it is very difficult to describe the behavior of the system as a whole by focusing on the behavior of individual components and relating them to the whole.

Now, without changing any of the observables (color shift, Y-movement, arrangement in a row), we bring them into a cohesive collaboration by raising the level of abstraction and converging the change of the many into a collaborative whole to implement a new concept.

Now let's describe this: ten squares, arranged horizontally in a row, move in a wave, both in their Y position and their blinking behavior.

This is when we can literally embody the saying, "The whole is more than the sum of its parts." Only by climbing to a higher level of abstraction was it possible to represent a wave with many individual parts. Conversely, it was only possible to implement the behavior of a wave by finding a rule that works on the simpler components that express qualities along the Y-axis and through changes of color.
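One possible rule of this kind, sketched in Python: every square evaluates the same function, and the wave only appears when the row is viewed as a whole. The sine formula, the two endpoint colors, and the function names are assumptions for illustration, not the implementation behind the animation.

```python
import math
from typing import List, Tuple

Color = Tuple[float, float, float]

# Hypothetical endpoint colors (RGB, 0..1): a desaturated blue and a bright red.
COLD_BLUE: Color = (0.45, 0.55, 0.70)
BRIGHT_RED: Color = (1.0, 0.1, 0.1)


def square_state(index: int, count: int, time: float) -> Tuple[float, Color]:
    """Y offset and color of one square, derived only from its place in the row."""
    phase = 2.0 * math.pi * (index / count - time)
    wave = math.sin(phase)       # Y offset in -1..1
    blend = (wave + 1.0) / 2.0   # 0 means fully blue, 1 means fully red
    color = tuple(b + (r - b) * blend for b, r in zip(COLD_BLUE, BRIGHT_RED))
    return wave, color


def row_state(count: int, time: float) -> List[Tuple[float, Color]]:
    """The whole row at one moment: the same rule applied to every square."""
    return [square_state(i, count, time) for i in range(count)]


row = row_state(10, 0.0)
```

The observables are unchanged (Y position, color, place in the row); only the rule that coordinates them lives one level of abstraction higher.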

We move cognitively between levels of abstraction to tackle complexity. We move up to remove details and increase context; we move down to increase detail and reduce context. At any level, different information about the same system becomes relevant. You probably first checked the title of this article, then moved down a level of abstraction to look at the table of contents or skim the headlines, and only then started reading.

I would like to compare the vertical dimension to classes in programming. The parent class has fewer observables; as such, it is relevant for a broader context, while subsequent child classes become more specific by introducing new details, making them more context-specific and useful, yet less malleable and reusable.
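As a rough Python illustration of that comparison (the class names and the `observables` helper are hypothetical), each child class adds observables to those of its parent, while code written against the parent keeps working for all of them:

```python
from typing import List


class Square:
    """Parent: a single observable, the position in the row."""

    def __init__(self, x: float) -> None:
        self.x = x

    def observables(self) -> List[str]:
        return ["x"]


class MovingSquare(Square):
    """Child: adds vertical movement, so it is more specific."""

    def __init__(self, x: float, y_velocity: float) -> None:
        super().__init__(x)
        self.y_velocity = y_velocity

    def observables(self) -> List[str]:
        return super().observables() + ["y_velocity"]


class BlinkingSquare(MovingSquare):
    """Grandchild: adds blinking on top of movement."""

    def __init__(self, x: float, y_velocity: float, blink_rate: float) -> None:
        super().__init__(x, y_velocity)
        self.blink_rate = blink_rate

    def observables(self) -> List[str]:
        return super().observables() + ["blink_rate"]


def leftmost(squares: List[Square]) -> Square:
    """Needs only the parent's observable, so it accepts every subclass."""
    return min(squares, key=lambda square: square.x)


squares = [Square(2.0), MovingSquare(1.0, 0.5), BlinkingSquare(0.0, 0.5, 2.0)]
```

`leftmost` is reusable across the whole hierarchy precisely because it stays at the highest level; a function that needs `blink_rate` would be more useful in its context but would accept only the most specific class.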

We apply vertical abstraction on a daily basis, and the idea of verticality in abstraction comes very naturally. This was—at least for me personally—less intuitive when I first came across its complementary horizontal dimension.

Dimension: Horizontal

Horizontal abstraction is again derived from Floridi's Method of Abstraction, and its counterpart in object-oriented programming is the interface. It occurs when we stay within the same level of abstraction and take the same observables, but consider only some of them while replacing others.

Here we see the perspective presented in the section about vertical abstraction. Now, instead of converging observables or increasing context, let us fix some observables and replace others (abstraction) to shift the perspective.

While this might look like a completely different system, we can still recognize the same squares. However, now we see them displaced along the Z-axis, a dimension introduced through the shift in perspective. By doing so, we also removed the observable of movement along the Y-axis. We can, however, associate them by their position along the X-axis and their blinking behavior given by the color shift. This is a horizontal abstraction of the same system!

The official name for this is a "Disjoint Gradient of Abstraction." It is the intersection of a set of observables, or a shift in perspective, or the partial observation of the same system from a different angle or in the context of a different purpose. New useful features come alive while others are hidden for the intended shift.

This is the polymorphic behavior of interfaces in programming. The interface defines the collection of features an object needs to fulfill to be useful inside a specific context. It can have multiple such contracts simultaneously. Let's take a bottle. The same object can have different horizontal abstractions:

- IHittable: Here, we are only concerned about the nature of the bottle when it is hit. Is it a glass bottle? How strong was the hit? What was the density of the hitting object? Will it now shatter?

- IOpenable: Here, we are only concerned about the bottle being an object that can be opened and, as a consequence, closed. What is the opening and closing mechanism? What motion needs to be applied?

- IFillable: In this abstraction, the bottle becomes a container for substances. Is it solid, like rice? Is it liquid, like milk? How big is the bottle, and therefore how much capacity does it have?
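In Python, these three contracts could be sketched with `typing.Protocol`. The interface names come from the text above; the method names, the `GlassBottle` class, and its numbers are assumptions made for the example.

```python
from typing import Protocol, runtime_checkable


@runtime_checkable
class IHittable(Protocol):
    def hit(self, force: float) -> bool: ...


@runtime_checkable
class IOpenable(Protocol):
    def open(self) -> None: ...
    def close(self) -> None: ...


@runtime_checkable
class IFillable(Protocol):
    def fill(self, amount_ml: float) -> float: ...


class GlassBottle:
    """One object that satisfies all three contracts at once."""

    def __init__(self, capacity_ml: float, shatter_force: float) -> None:
        self.capacity_ml = capacity_ml
        self.shatter_force = shatter_force
        self.content_ml = 0.0
        self.is_open = False
        self.is_broken = False

    def hit(self, force: float) -> bool:
        """Return True if the bottle is broken after the hit."""
        if force >= self.shatter_force:
            self.is_broken = True
        return self.is_broken

    def open(self) -> None:
        self.is_open = True

    def close(self) -> None:
        self.is_open = False

    def fill(self, amount_ml: float) -> float:
        """Add up to amount_ml and return how much actually fit."""
        if not self.is_open or self.is_broken:
            return 0.0
        accepted = min(amount_ml, self.capacity_ml - self.content_ml)
        self.content_ml += accepted
        return accepted


def pour_into(container: IFillable, amount_ml: float) -> float:
    """Sees the bottle only as a container; hitting and opening are out of view."""
    return container.fill(amount_ml)


bottle = GlassBottle(capacity_ml=500.0, shatter_force=10.0)
```

`pour_into` would accept any object with a matching `fill` method, bottle or not, which is the sense in which an interface is a horizontal slice through an object's observables.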

Applicability

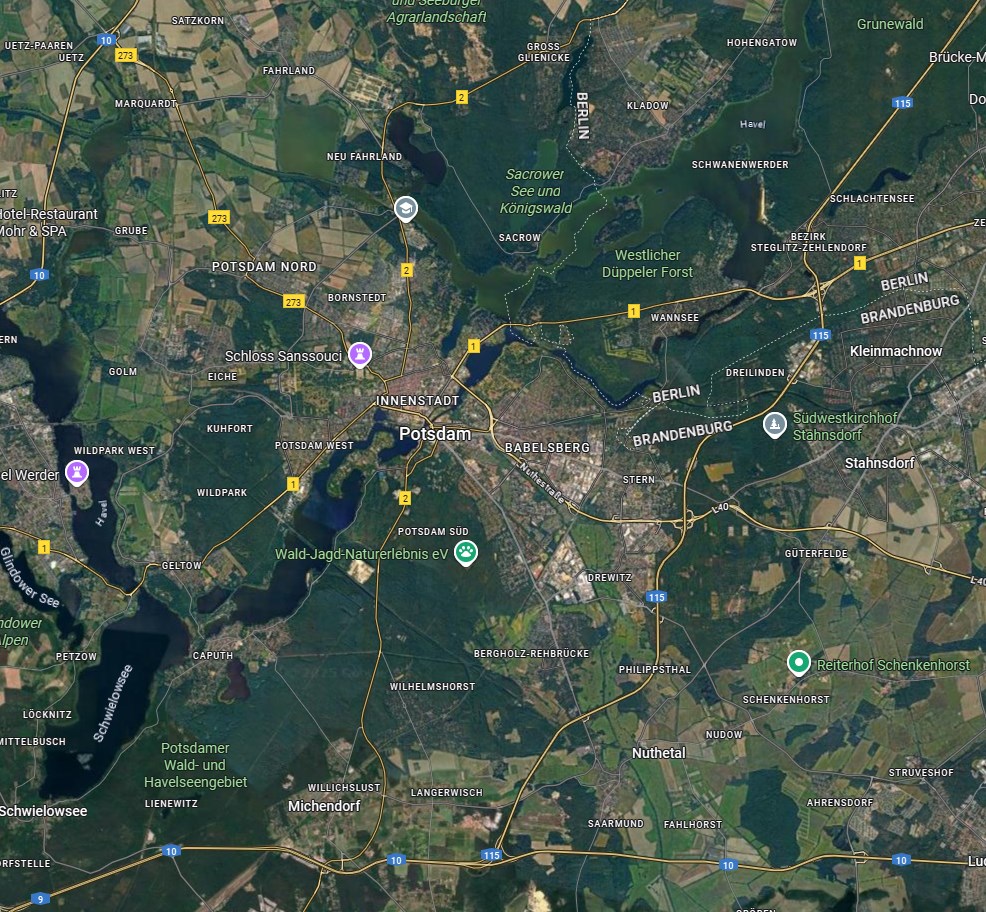

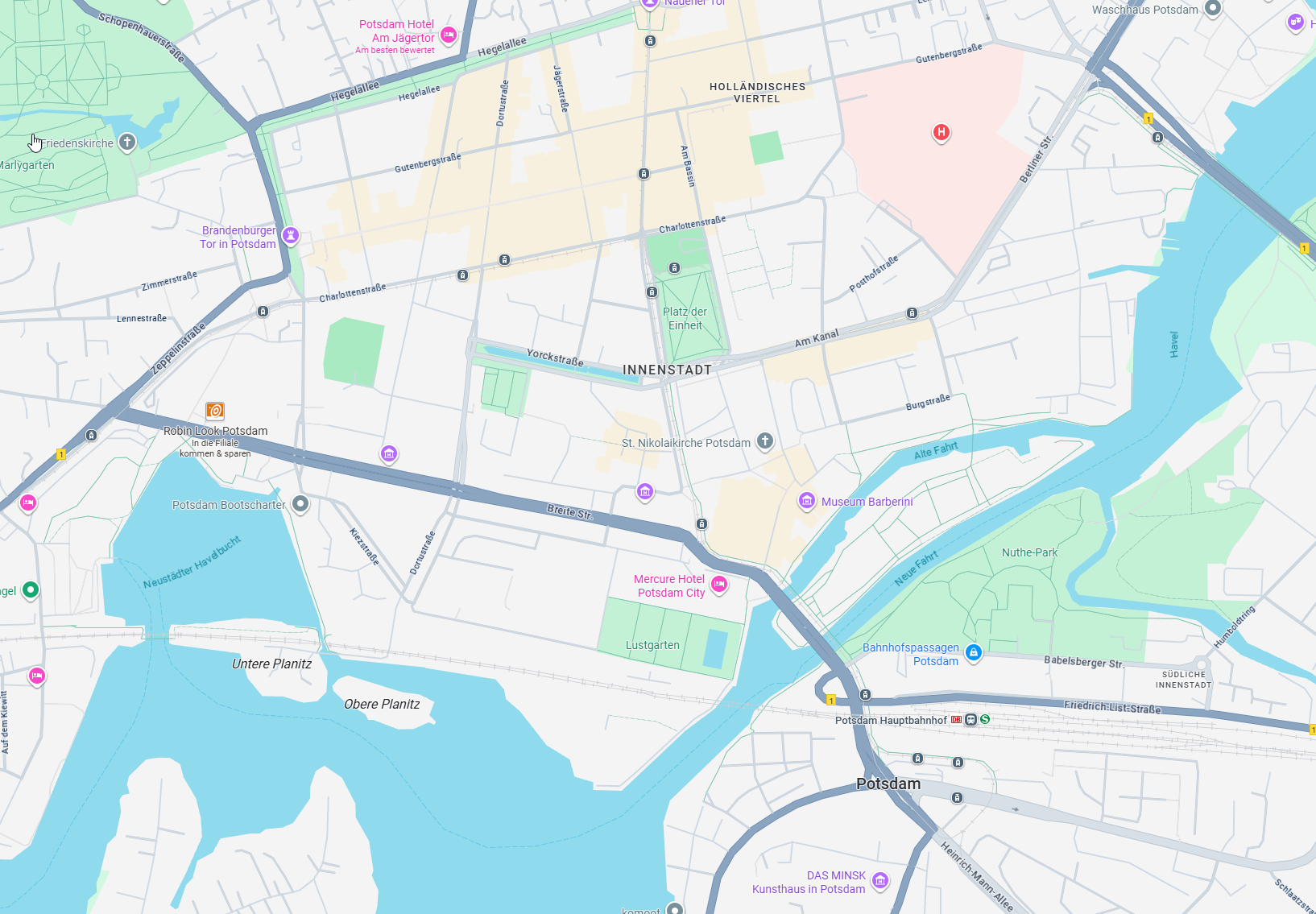

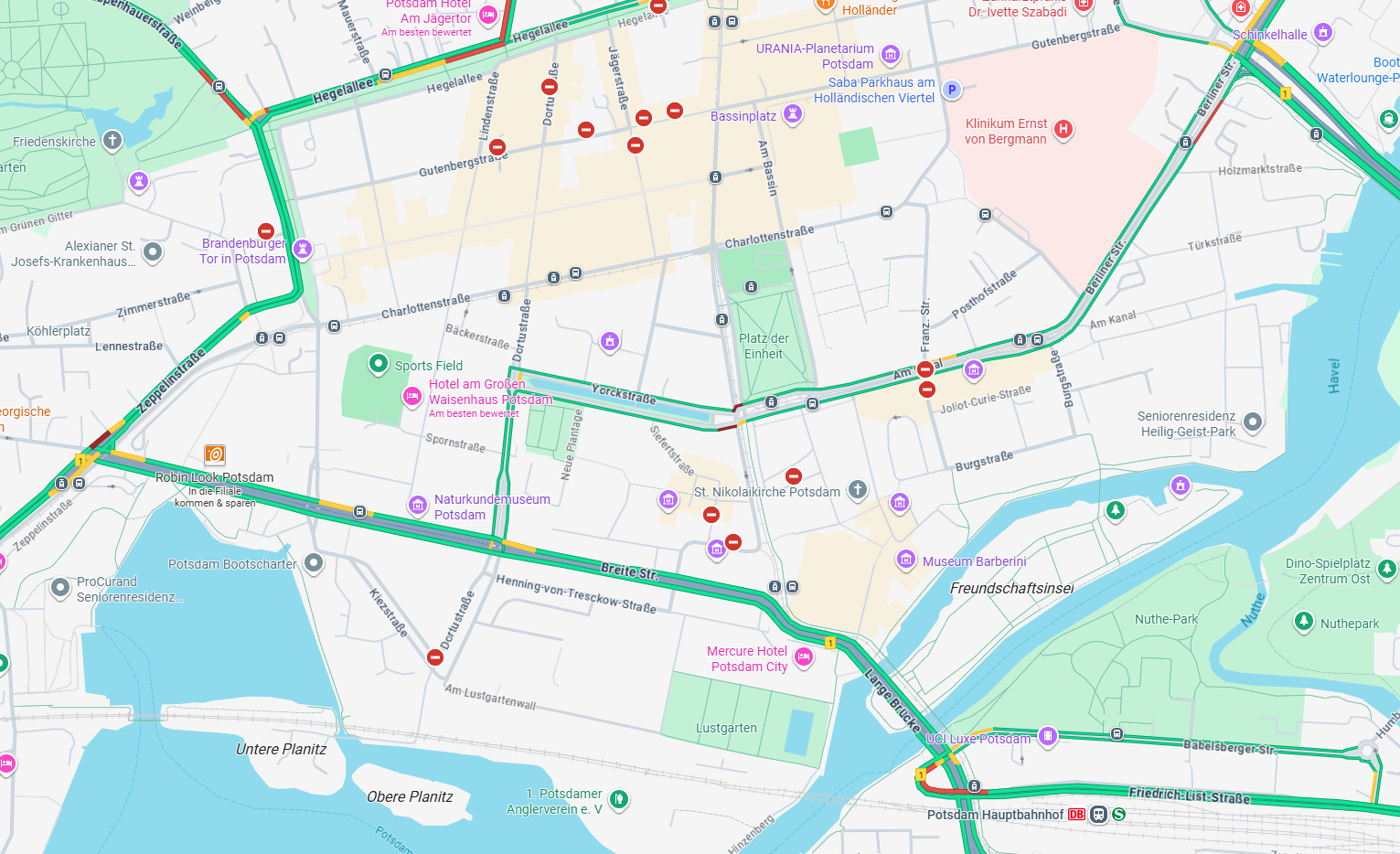

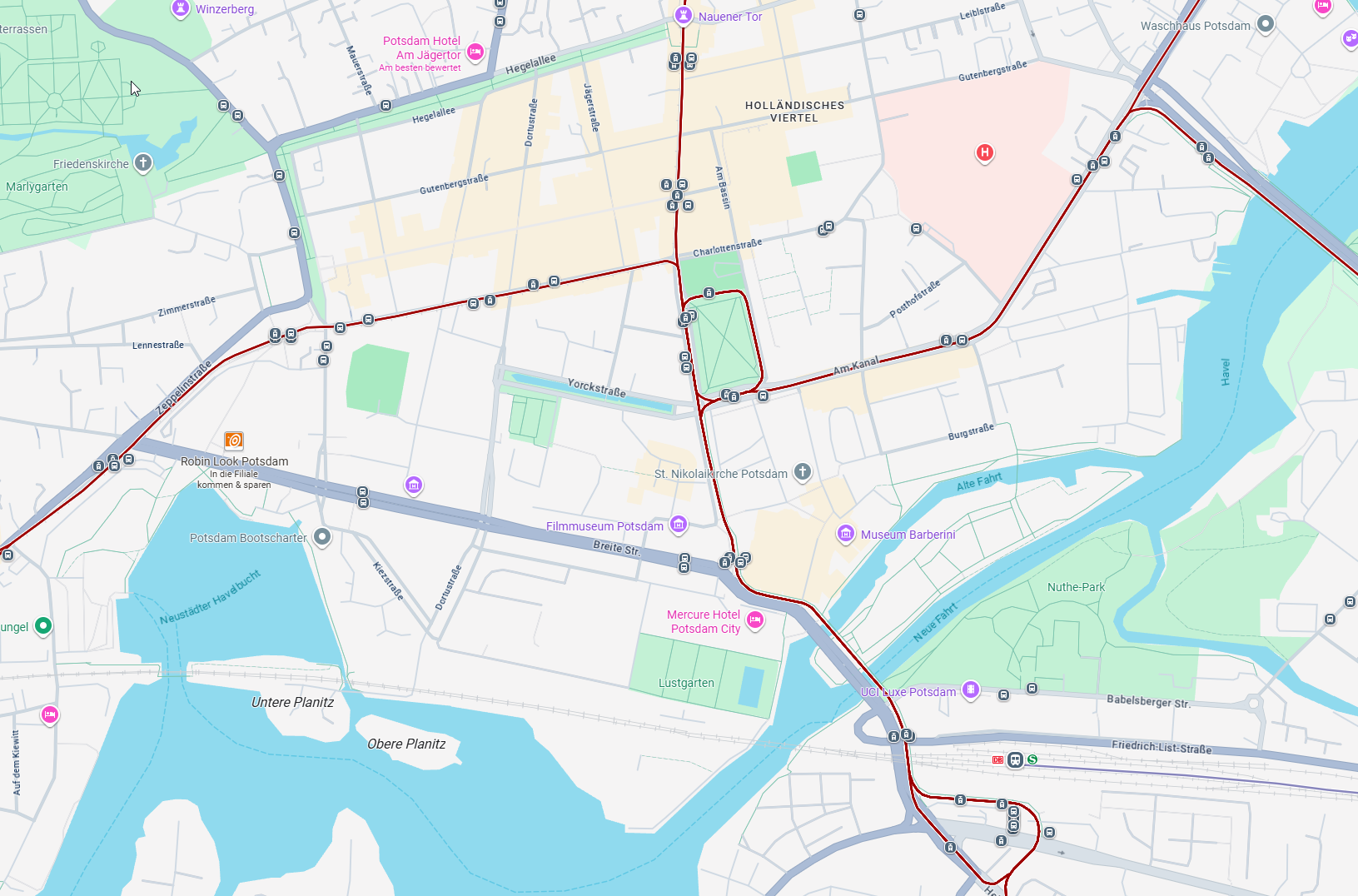

Since these two dimensions are complementary, I would like to showcase them together using the same example: a map, which is itself a representation (abstraction) of real geographical data with many observables.

I live in Potsdam, so I will use this city to make the article a bit more personal.

That is a lot to process. Let’s use vertical abstraction to zoom into a region. By moving down a level of abstraction, we decrease the context—essentially, zooming in.

Much better—or is it? You might think this is the appropriate use of the vertical dimension. While we have decreased the context, the new image is arguably just as cognitively loaded as the previous one. We still have the same observables, just a different geographical scope. Let’s call this Level 0. Let’s try again.

Now we’re getting somewhere. Using the same framing, we simply remove some observables—the labels. This creates an abstraction unconcerned with the names and functions of buildings, streets, and districts. It’s a purely photographic perspective: Level 1. Let’s increase the abstraction further.

(Unfortunately, Google Maps doesn’t allow for this view without labels, so please imagine them removed for the sake of the example.) We have abstracted away the real buildings, which previously formed a visually complex pattern, drawing too much attention with all their details and consuming cognitive effort just to parse. What remains is a more abstract visual representation of the city: Level 2. This enables us to visualize shapes and proportions—such as water versus land. We can clearly follow streets and see the conceptual structure of districts and the city’s contours.

Now, staying at Level 2, let’s shift horizontally by introducing new or overlapping observables.

This overlay adds traffic information: colors on main streets indicate congestion, and icons show restricted zones. Let’s call this Level 2 Traffic. (Strictly speaking, this isn’t a pure horizontal abstraction, since it only adds observables without removing others, but I prefer to keep the metaphorical consistency.)

One final example:

This overlay highlights public transportation lines, tracks, and stops. In this abstraction—Level 2 Public Transport—the city becomes a traversable network.

To put this example in the full context of all the dimensions: we are moving within the representational and analytical spaces, first selecting a suitable vertical level through the vertical dimension, then switching between horizontal abstractions on the horizontal dimension.
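The map walkthrough can be modeled as operations on sets of observables. In this Python sketch the observable names are my own labels for what the screenshots show, not an actual map API, and `hide` and `overlay` are hypothetical helpers.

```python
from typing import FrozenSet

View = FrozenSet[str]


def hide(view: View, *names: str) -> View:
    """Vertical step up: remove observables from a view."""
    return view - frozenset(names)


def overlay(view: View, *names: str) -> View:
    """Horizontal shift: bring other observables into the same view."""
    return view | frozenset(names)


# Level 0: the zoomed-in map with everything visible.
LEVEL_0: View = frozenset(
    {"aerial imagery", "buildings", "labels", "streets", "water", "land"}
)
# Level 1: the same framing without labels.
LEVEL_1 = hide(LEVEL_0, "labels")
# Level 2: real buildings abstracted away; shapes and proportions remain.
LEVEL_2 = hide(LEVEL_1, "aerial imagery", "buildings")

# Horizontal variants that share Level 2 as their base.
LEVEL_2_TRAFFIC = overlay(LEVEL_2, "congestion", "restricted zones")
LEVEL_2_PUBLIC_TRANSPORT = overlay(LEVEL_2, "transit lines", "tracks", "stops")
```

Each vertical step yields a strict subset of the previous level, while the two overlays differ from each other yet share exactly the Level 2 observables.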

Abstraction beyond programming

To understand the central piece of my thesis, it is important that there are no misunderstandings about what is meant by abstraction up to this point. Allow me to recapitulate. While we have explored many definitions and ideas surrounding abstraction, we need to return to a central definition from which we can work. Abstraction is the process of purposefully removing features (to abstract); yet, by doing so, we create an associated object linked to the original (an abstraction), so that there can be multiple abstractions along different dimensions: representation to concept, logical/analytical to intuitive, vertical, and horizontal.

The best example was the pen. Instead of focusing on the question "How does it make signs?", we turned it into an abstraction by saying "a device that can make signs." The pen exists as that very specific example in front of us, but it also exists as multiple different abstractions simultaneously inside the multidimensional space of abstraction's dimensions.

In programming, abstraction is used to find common interfaces and make solutions reusable. As we saw in the very first section, this is commonly done by inheritance and polymorphism, which I have linked to vertical and horizontal abstraction, respectively. Abstraction helps decompose hard problems and complex systems into smaller parts, then provides the glue to fit them back together, while ensuring firm boundaries with moving parts. This happens on the semantic level of code—the act of programming.

The visible syntax of a language must support writing language mechanisms of abstraction like classes, interfaces, and type variables as structured tokens, yet they are all concepts. But syntax, free of any semantic association, is just text—a form of notation that helps us structure ideas and processes into hierarchies and relationships: declarations, names, labels, structures, indentations, and parentheses. On this level, we can use vertical abstraction to understand a module by skimming over its function names, and we know exactly where to find a rule about our system by diving deeper—ideally in one place! This is what the Single Responsibility Principle dictates.

We need to make the cognitive leap and accept this dual nature of code. On one side, it is the syntactical translation of our programming intent; on the other, it is just a visual notation system in text form. The same is true for the graphical user interface (GUI). A GUI is also a visual representation of a function. In fact, the way we build GUIs is by binding functions to graphical components and giving access to the input parameters of those functions—either explicitly, by providing sliders, buttons, and textboxes in a separate panel or window, or in a more experiential way, by allowing other forms of interaction, like moving a gizmo in the editor or triggering a function based on a certain action in the GUI.
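The idea that a GUI is a visual representation of a function can be sketched in a few lines of Python. This is a toy model, not how any particular engine builds its panels: the `Widget` type, the `WIDGET_FOR_TYPE` mapping, and the `set_fog` function are all assumptions made for the example. It derives one widget per input parameter from the function's signature.

```python
import inspect
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Widget:
    label: str
    kind: str
    default: Any


# Hypothetical mapping from parameter type to widget kind.
WIDGET_FOR_TYPE = {float: "slider", int: "slider", bool: "checkbox", str: "textbox"}


def build_panel(func: Callable[..., Any]) -> list[Widget]:
    """Derive one widget per input parameter of func."""
    widgets = []
    for name, param in inspect.signature(func).parameters.items():
        kind = WIDGET_FOR_TYPE.get(param.annotation, "textbox")
        default = None if param.default is inspect.Parameter.empty else param.default
        widgets.append(Widget(label=name, kind=kind, default=default))
    return widgets


def set_fog(density: float = 0.2, enabled: bool = True, preset: str = "storm") -> str:
    return f"fog {preset} at {density}" if enabled else "fog off"


panel = build_panel(set_fog)
# Pressing a "button" is then a call with the values the widgets currently hold.
result = set_fog(**{w.label: w.default for w in panel})
```

The panel is nothing more than the function's parameters in a different notation, which is the dual nature described above.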

As the documentation of TouchDesigner claims1, the interface for any application is a type of visual metaphor. And as we have seen, a metaphor is an abstraction. Another concept related to this is affordance. Originally coined by James Gibson and later adopted by Don Norman for interaction design2, affordance is defined as the relationship between the qualities an object has and the abilities these qualities express in regard to how to use them—an interface: an abstraction. More specifically, I like to think of affordances when we recall the pen example. When we thought of the pen as the device that makes signs, we were thinking in terms of affordances. This also becomes important when we talk about representing intent, especially in the GUI.

The Cognitive Dimensions of Notation Framework

One of my main claims is that, based on abstraction, we can manipulate the cognitive effort an object or its representation requires. As such, I looked for a way to classify cognitive qualities to have even more ways to express the effects of abstraction.

The Cognitive Dimensions of Notation framework1 is, in fact, very much related to programming and GUIs, as it examines and defines dimensions or qualities of visual coding environments.

These are:

- Viscosity: how difficult it is to move or change existing functionality

- Visibility: the ease of viewing components

- Premature Commitment / Provisionality: constraints on the order of an activity (the antithesis of iterability)

- Hidden Dependencies: important relationships are not visible

- Role-Expressiveness: how easily we can infer the function of a component (affordance)

- Error-Proneness: how readily the representation invites mistakes

- Abstraction: This one is tricky inside a whole thesis dedicated to abstraction. But it refers to our horizontal and vertical dimensions more than anything. I like to call it Depth of Control instead.

- Secondary Notation: the ability of the primary function of your notation to also help provide visual structure

- Closeness of Mapping: how well the concept is mapped to its representation

- Diffuseness / Conciseness: two polar opposites describing how much information (how many observables) is required to understand a component

- Hard Mental Operations: how many steps need to be committed to working memory to maneuver a component

- Progressive Evaluation: how easy it is to have a partially complete result which can be evaluated as a whole

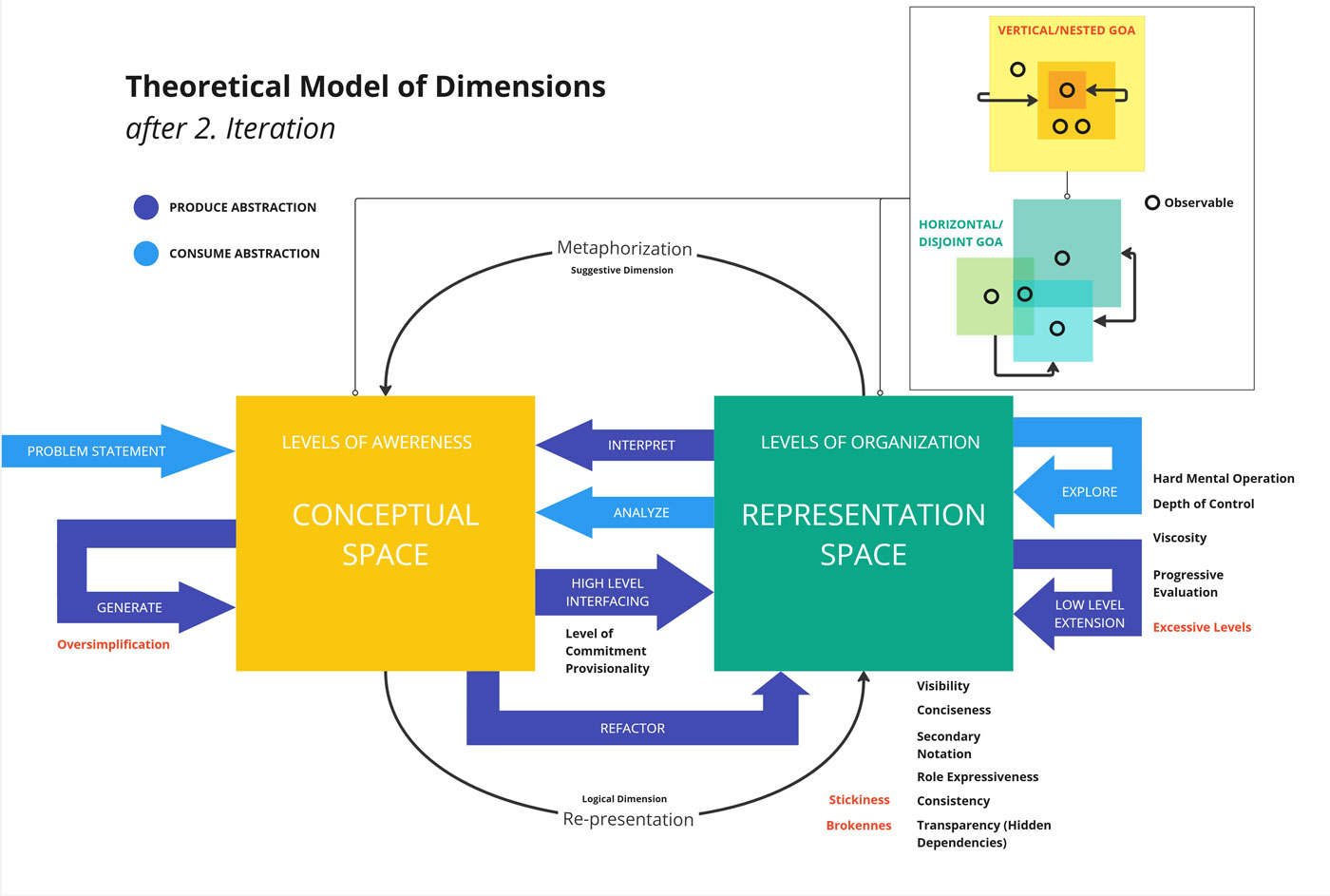

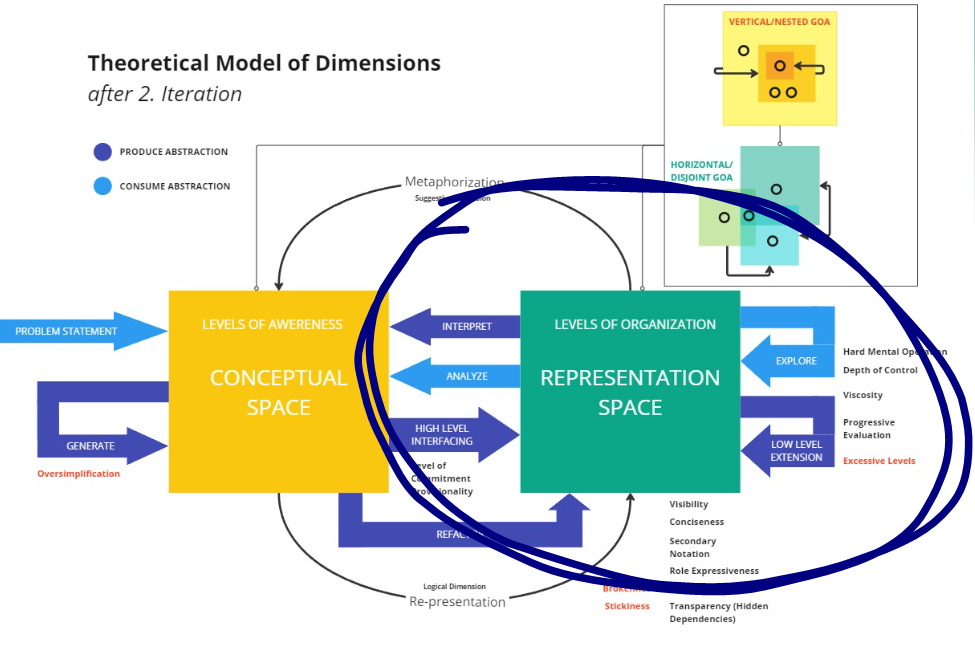

An Actionable Iterative Model

Finally, before dedicating the article to one last example, I made the effort to visualize the dimensions and reframe them inside an iterative loop, derived from UX design and development, with steps like: Conceive, Prototype, Implement, Evaluate, Analyze, and back to Conceive. This also integrates notions from creative cognition, like the Geneplore model, which is a smaller loop of a generative phase and an exploration phase. I also added a few additional steps I deemed necessary for completeness.

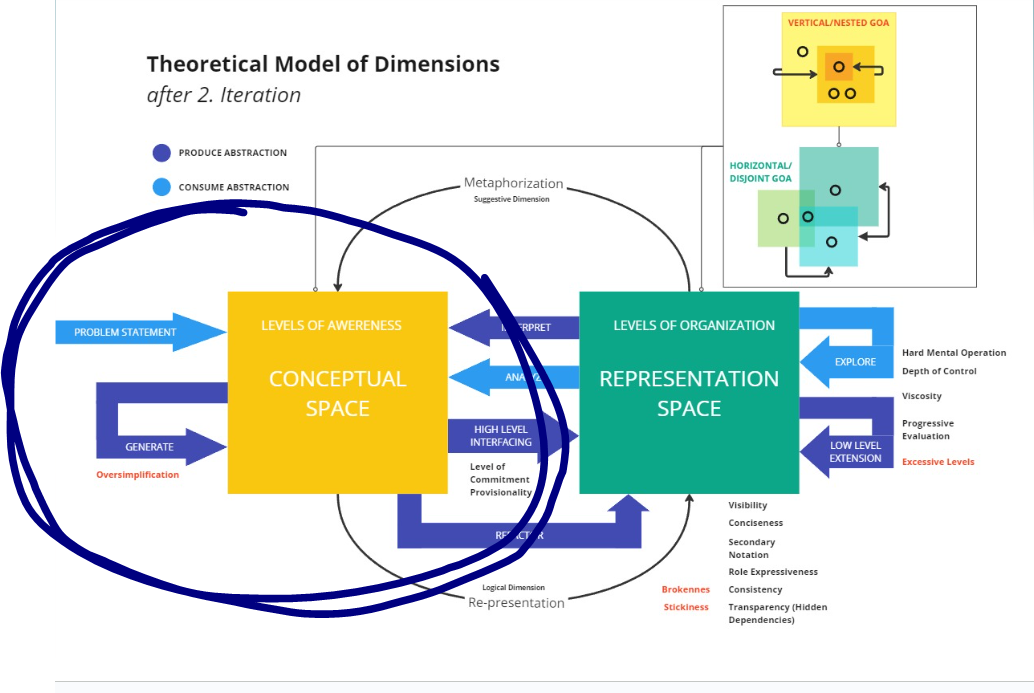

We have the main dimension of the conceptual space and the representational space. As we remember, abstraction both helps us move between them and also takes place inside them. To evaluate the effect of the representation, we use the CDNf. Orthogonal to these two are the analytical thinking mode inside the logical space, which can take place in both the representational and the conceptual space, and the intuitive space, in which we associate freely. We also associate two verbs with this dimension: re-presentation (moving a free idea to a concrete reality) and metaphorization (observing an object in reality and associating it freely with other concepts). What the diagram does not show clearly enough are the vertical and horizontal dimensions. While moving inside the conceptual, representational, logical, or intuitive space, we do so in terms of vertical abstraction, and inside each vertical level of abstraction, we shift between different horizontal abstractions.

Think of our maps example inside the analytical and representational space. Think of the levels of awareness inside the conceptual and intuitive space. The little circles are an abstraction for observables, and the vertical and horizontal levels are shown as intersecting abstractions (disjoint gradient) and nested abstraction (nested gradient).

The model can be seen as a mapping of actions to move between these dimensions using abstraction in an active, producing role, or in a passive, consuming role. One invents or transforms features to new abstractions; the other reads them to reconstruct an intended abstraction. For example, refactoring applies a new conceptual organization to an existing representation, changing the underlying abstraction. Low-level expansion, or implementation, is when we add lower vertical levels to add more detailed rules to our system, replacing a simpler or empty implementation.

I think we have now built a sufficiently expansive vocabulary to talk about abstraction in all its forms and articulate its effects. Let us move to its application in the context of content creation engines. I chose the term content creation engine because it is yet another abstraction, which elevates game engines into a more generally applicable purpose: any content. This can be generative art, games, or films—or, more specifically, the tools for virtual production, which we will look at now.

The Shotlist as Sequencer: An Abstraction for the Pre-Production Workflow

Picture this: we had to produce a collection of pilot episodes for a surreal TV show set in a room with a fictional city and extreme weather scenarios reflecting the characters' moods. The final product turned out well and even started to gain some traction.1

At the time, the production team and director decided on a static set design—a generous glass facade with an LED wall behind it.

I was brought onto the team after the goal was set: accelerate the production timeline using virtual production. Our small team consisted of two VFX artists (responsible for weather and city, among other things), one Unreal operator (who, together with the director and cinematographer, blocked out every scene, beat by beat, to enact the script, find potential pitfalls, and explore dramatic camera angles), and a technical producer (ensuring our technical team could work independently and efficiently). My role was infrastructure: managing machines, remote connections, version control, server setup, project organization, and developing tools to help the VFX artists and operator work faster and avoid repetitive or impossible manual tasks. I had never done virtual production before, but I relied on my Unreal Engine expertise and confidence in my ability to code my way out of any problem.

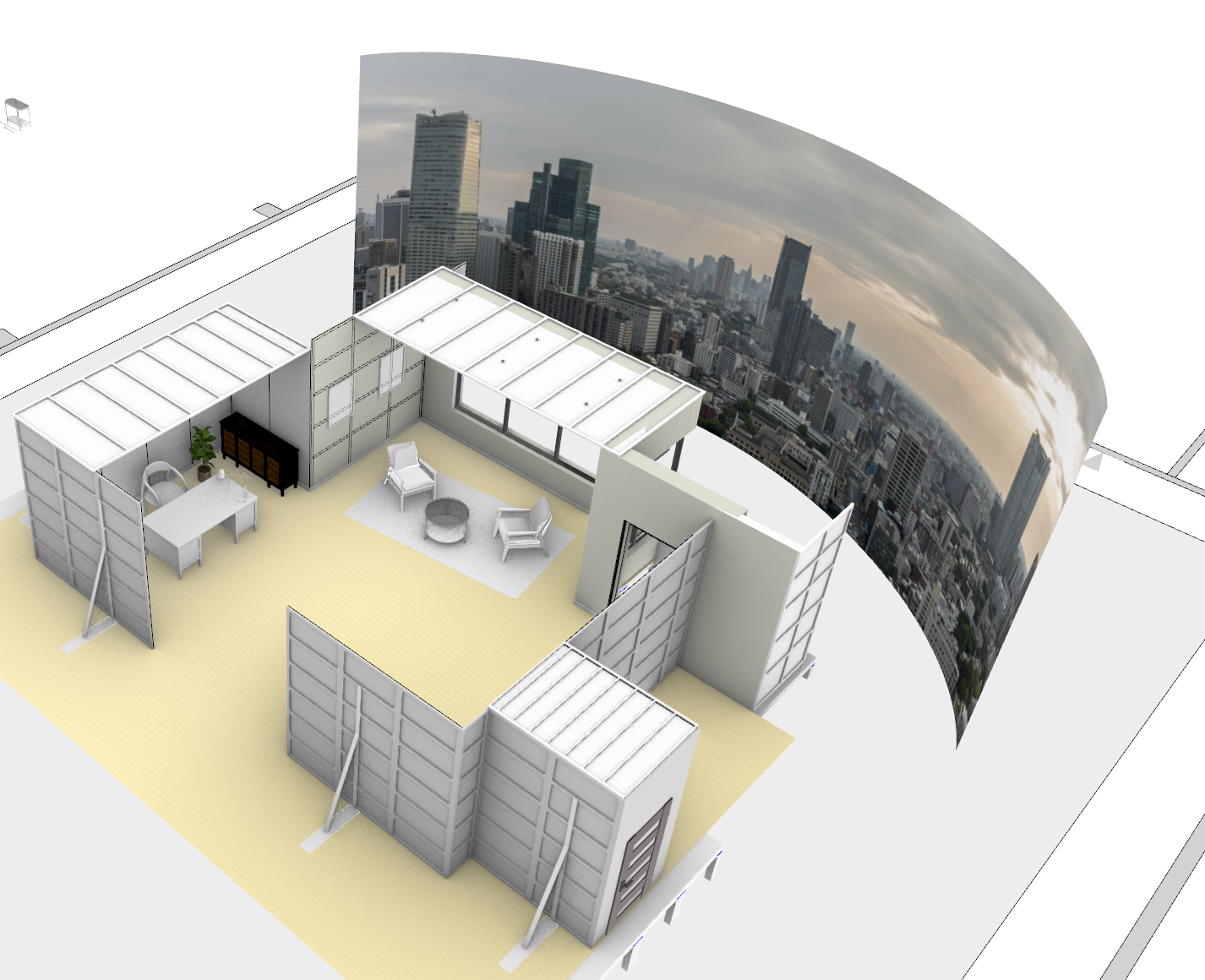

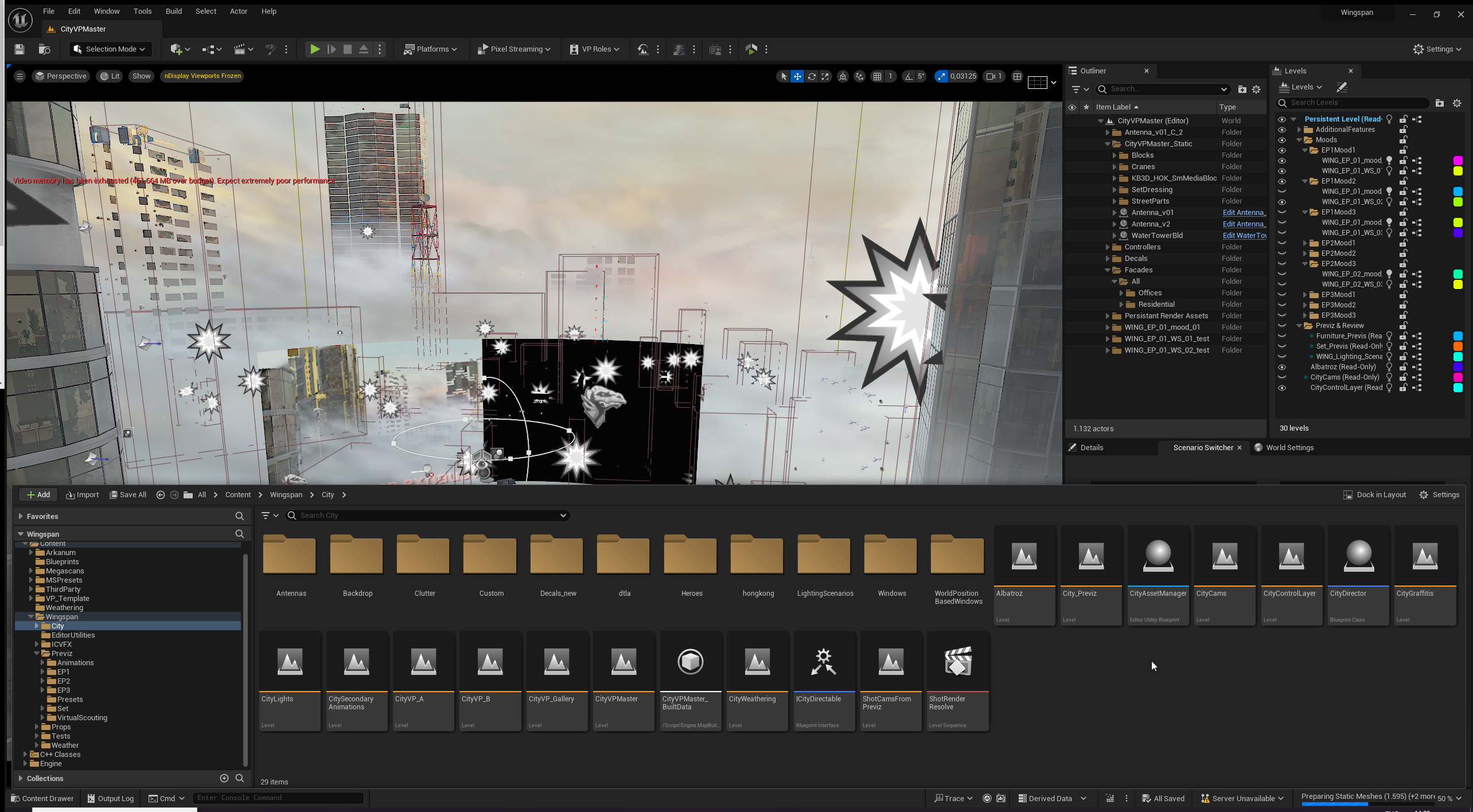

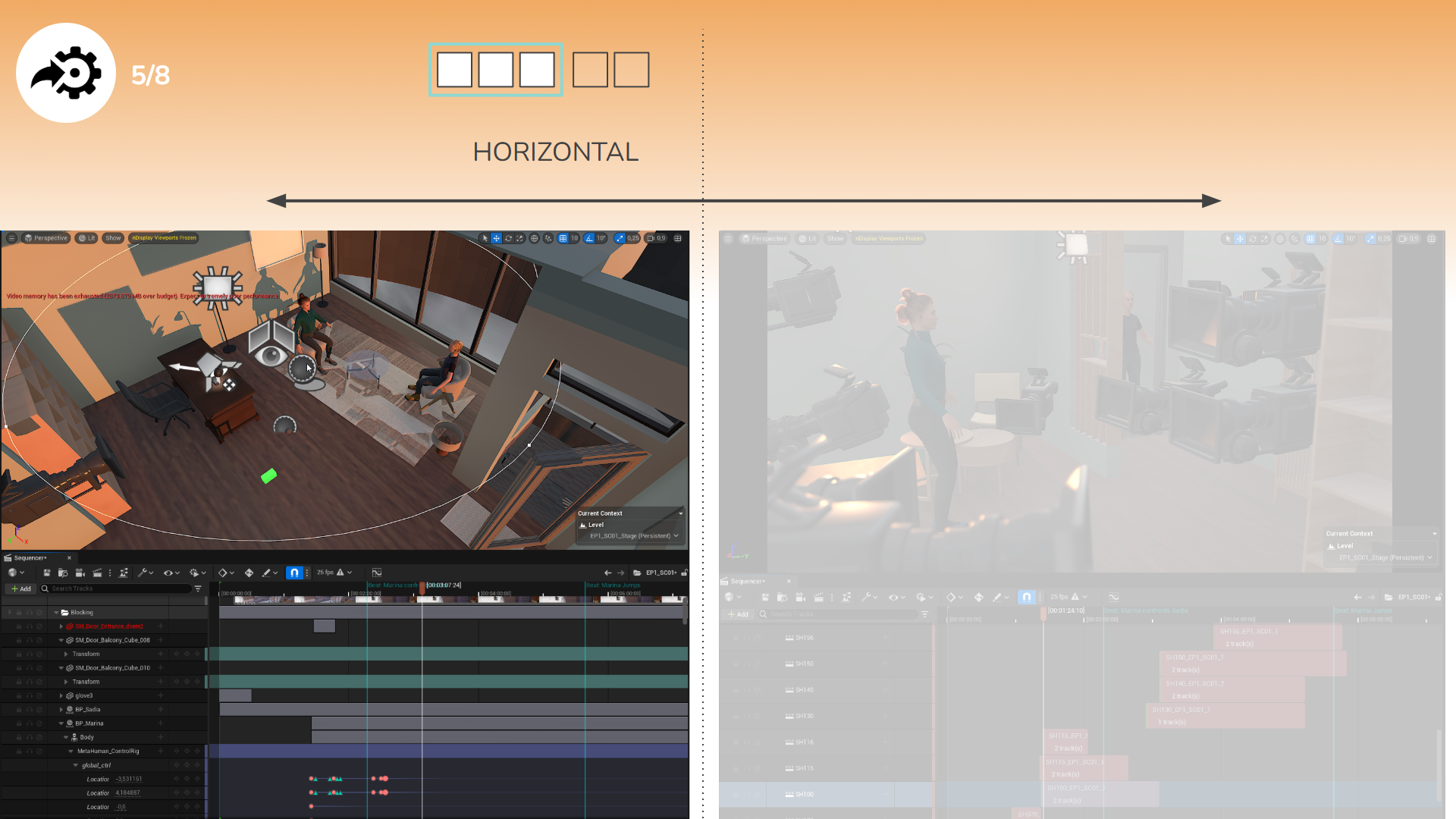

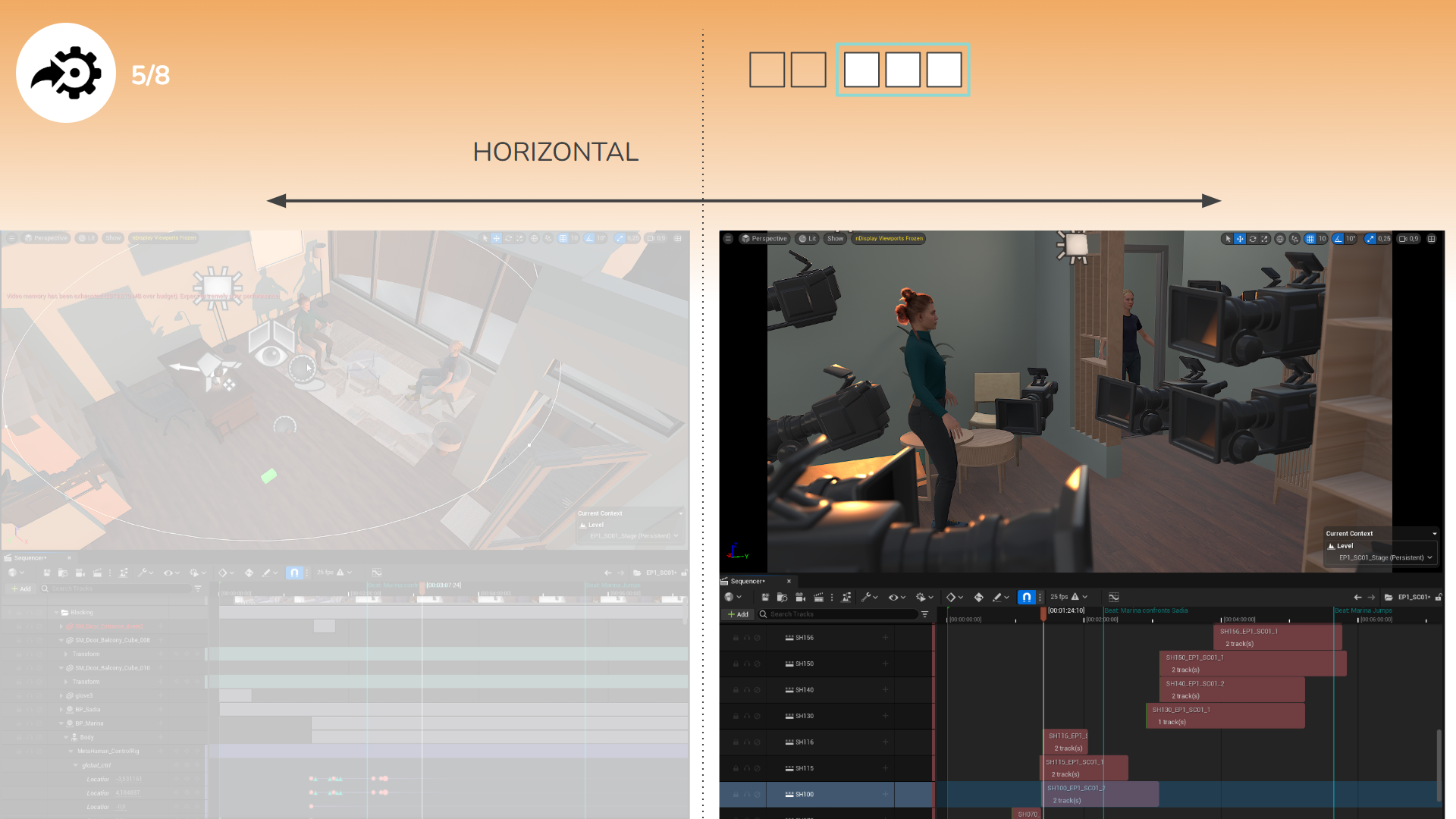

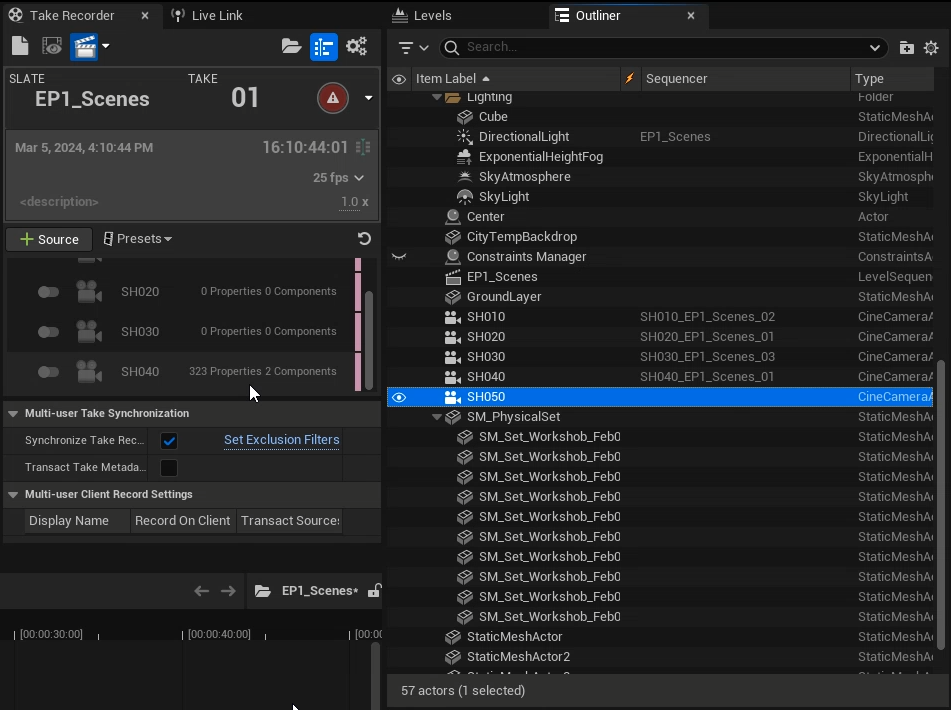

For reference, here is our final virtual set for pre-production and the real set with in-camera VFX. What you see is what you get.

In my thesis, I showcased the full process, often mixing abstraction dimensions and cognitive dimensions. Here, I’ll clarify by splitting my process into two sub-sections: first, abstraction in the conceptual space, then in the representational space.

Conceptual: Connecting the Dots

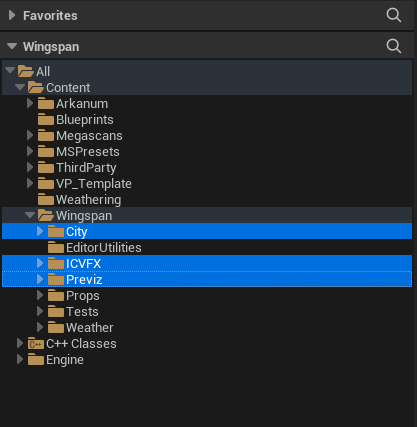

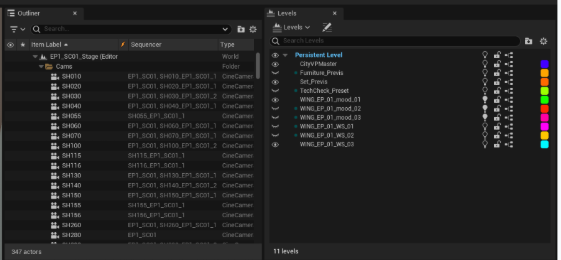

It was clear to me that the project was composed of multiple levels of abstraction. I like to structure content by client—in this case, Arkanum Pictures. This is where I put reusable tools and assets for future virtual production projects. There was also some noise from external systems: Megascans, MSPresets, Blueprints, Weathering, and VP_Template. The "Third Party" folder collected external assets not directly tied to our project. At this highest level of abstraction, I focused on responsibilities and project ownership, in case we needed to migrate components.

Now, the core of the project: the actual movie project, Wingspan. It was split into a City (constant for both Previz and ICVFX on set), crossed with different weather scenarios and filled with props. We also developed specific editor utilities for city and weather workflows.

ICVFX and Previz were two horizontal abstractions of our workflow, both centered on the city. While I crafted tools for one, my operator worked on the setup for the other. The best abstraction mechanisms for project-level organization were streaming levels and subfolders, so Weather, City, and the two workflows could coexist without hidden dependencies or conflicts.

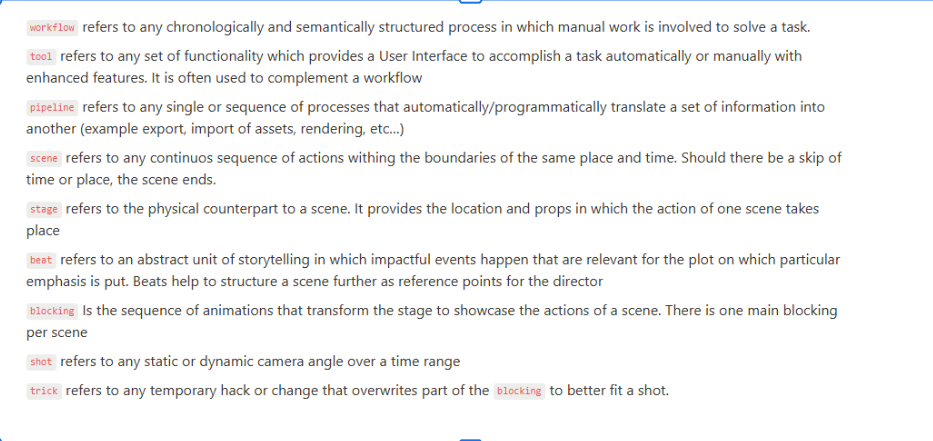

With the main structure built, I focused on workflow and tools. I started by clarifying terminology with my technical producer, Jonathan, breaking down every concept and mapping relationships. From a high level of abstraction, I used logic to connect concepts and map out the workflow's requirements.

For the team, I defined "workflow" and "pipeline"—horizontal abstractions of each other. Workflow involves manual steps; pipeline involves automation and data translation.

Other distinctions included scene vs. stage, and scene, blocking, and beat. Stage and scene are horizontal abstractions: the stage defines props and spatial requirements, while the scene enacts script events. Blocking represents the script in the scene, while a beat is a higher-level abstraction dividing the scene by dramatic moments.

Finally, shot and trick: a shot is the camera framing of an event, dynamic or static; a trick is a temporary stage adjustment for a shot (e.g., removing a lamp that occludes an actor or timing a flock of birds to fly by the window).

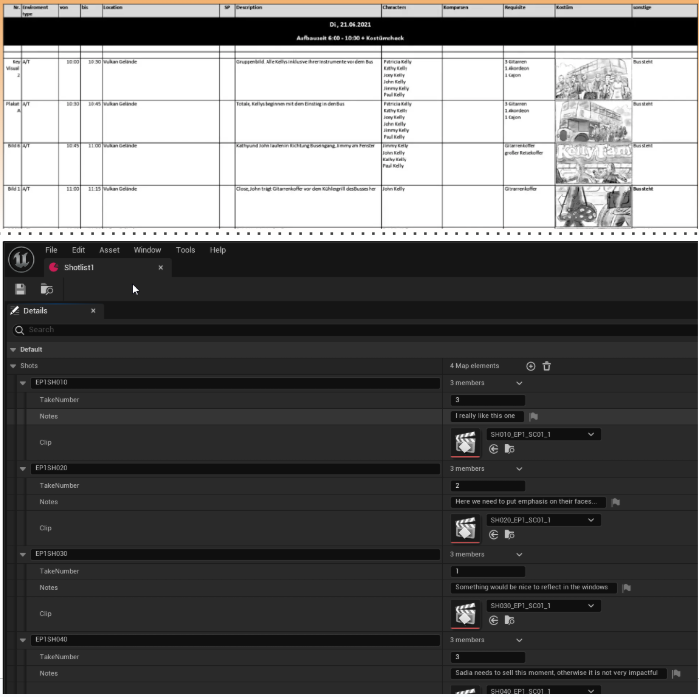

With this knowledge, I analyzed a shotlist and implemented it as a data structure in Unreal Engine. My idea, similar to streaming levels, was to use references converging into a master object. I programmed a data object to collect all shot references. The first mockup resembled a shotlist.

I created a tabular form listing shots linearly, with shotlist names, take numbers, director notes, and associated level sequences. However, let me illustrate the problems using the Cognitive Dimensions of Notation (CDNf):

- Closeness of Mapping: The representation matches the shotlist, and we can render previews efficiently. But we’re missing the scene, especially in relation to the stage. Blocking, framing, beats, and tricks aren’t visible unless we open each sequence in its map.

- Visibility: This workflow is disjointed.

- Hard Mental Operations: Modifying a shot requires remembering how other shots ended, risking continuity errors and high error-proneness.

- Role Expressiveness: The relationships and functions of shots are unclear.

This was a less-than-ideal solution. I started programming from the pipeline perspective, aiming for automation, and projected the shotlist concept into the representational space. But I didn’t fully account for all necessary concepts. We needed to understand existing tools and project them back into the conceptual space to match each abstraction with its representation.

Starting from a problem statement (an abstraction), I generated connections and simplified definitions, then projected the shotlist into the representational space as a high-level interface—a data object linking manual work in the map and the automated pipeline.
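For reference, the first tabular design can be sketched as a plain data object. This is a minimal Python model rather than the actual Unreal asset: the class names, field names, and example path are assumptions chosen to mirror the columns described above (shot name, take number, director notes, and the associated level sequence).

```python
from dataclasses import dataclass, field


@dataclass
class ShotRow:
    shot_name: str
    take_number: int
    director_notes: str
    level_sequence: str  # hypothetical asset path of the referenced sequence


@dataclass
class ShotlistAsset:
    """Master object into which all shot references converge."""

    scene: str
    rows: list[ShotRow] = field(default_factory=list)

    def add(self, row: ShotRow) -> None:
        self.rows.append(row)

    def as_table(self) -> list[tuple[str, int, str, str]]:
        # Linear, one row per shot: exactly what a paper shotlist looks like.
        return [
            (r.shot_name, r.take_number, r.director_notes, r.level_sequence)
            for r in self.rows
        ]


shotlist = ShotlistAsset(scene="Scene01")
shotlist.add(ShotRow("Shot_010", 1, "slow push-in", "/Game/Example/Shot_010"))
shotlist.add(ShotRow("Shot_020", 2, "wide, rain", "/Game/Example/Shot_020"))
table = shotlist.as_table()
```

The weakness is visible in the types themselves: nothing in a row says where the shot sits on the stage, which beat it covers, or which tricks it needs. All of that lives behind the `level_sequence` reference.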

Representational: Repurposing Systems

I was unhappy with the initial design. Even without virtual production experience, I sensed this would be a disaster. I couldn’t yet articulate the need for clear levels of abstraction and their impact on cognitive dimensions, but my intuition told me something was wrong.

For a week, I experimented with Unreal Engine’s workflows for organizing sequences and recording shots. I aimed for seamless integration of workflow and representation, exploring the sequencer’s potential for creating horizontal and vertical boundaries, adding details, and replacing shots and tricks. At first, I was only analyzing the system, not yet connecting the shotlist concept, sequencer, workflow, and pipeline.

My technical preconception was that a sequence equals one shot. Previously, I represented shots as level sequences referenced in the data object. The key insight was realizing I could record shots directly into the sequence. Each camera could represent a shot, and binding the camera in the sequencer formed a track. I began to metaphorize the shotlist using the sequencer.

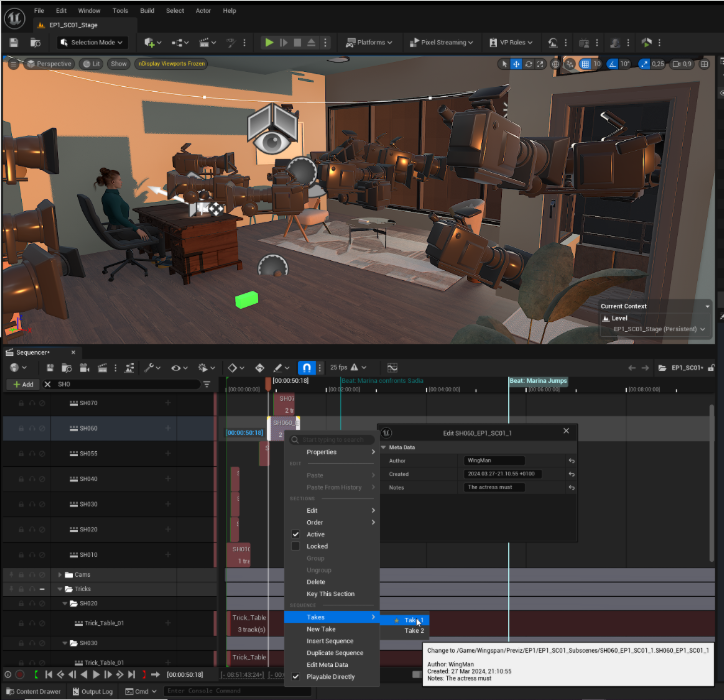

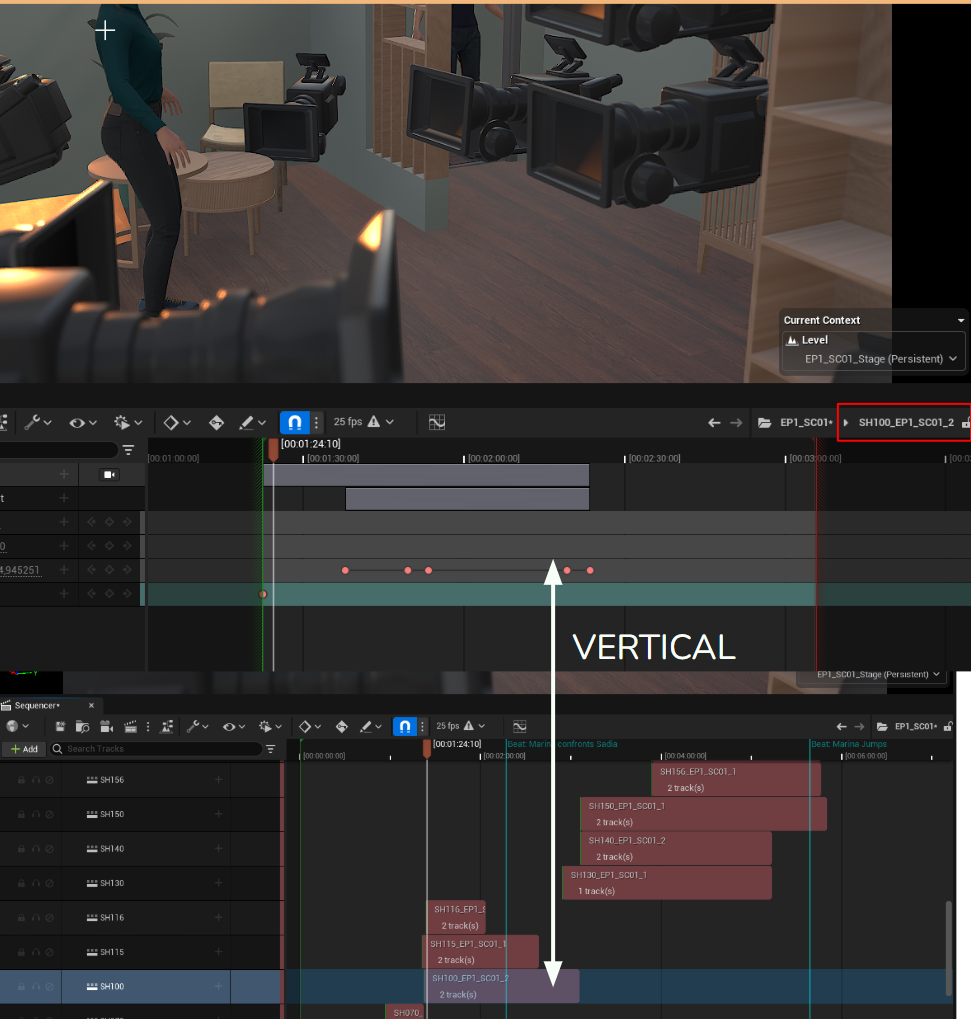

The sequencer is a powerful, clean interface, though it doesn’t show the entire shotlist at once. In this vertical slice, I can illustrate the components:

- Closeness of Mapping: The scene is represented as the full level sequence, containing shots as camera tracks. The stage (the map) shows the physical positions and blocking. Markers represent beats. Tricks are track folders named after shots, containing temporary changes (spawnable possessables or position overrides). I wrote an algorithm to mute all other trick tracks based on the selected shot. This representation became the main workflow canvas, directly producing the final data structure. The pipeline is missing here, but I’ll cover that next.

- Viscosity: Adding or removing tricks was effortless—just move items into or out of a trick folder. Shots could be replaced via an integrated solution: a shot bound as a subsequence track, with matching names allowing the engine to list variants in a dropdown.

- Visibility: Everything was visible—stage, scene, shot relationships—reducing hidden dependencies and error-proneness.

- Premature Commitment/Provisionality: The representation was workflow-first, allowing artists to express themselves freely, as long as they respected the established abstraction.

- Role Expressiveness: This representation integrated with familiar abstractions and their feature sets. Shots were tracks and physical actors, so any tool for actors or tracks could be used. I leveraged this to extend the workflow further.

- Secondary Notation: Supported via markers (for beats) and a metadata window for each shot (editable via right-click), useful for director notes.

- Progressive Evaluation: At any point, the current scene state could be previewed in the viewport using the main camera cut track—no rendering needed. We could stop at any time and have a complete state.

- Diffuseness/Conciseness: The only real weakness was the diffuseness of a long shotlist—not all shots could be visualized at once, but I addressed this in the next step.
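The mapping from the list above, and the rule for muting trick tracks, can be sketched on a simplified model. This is plain Python and not the Unreal Sequencer API. The types `SceneSequence` and `TrickFolder`, and the function `select_shot`, are hypothetical stand-ins for camera tracks, markers, and track folders.

```python
from dataclasses import dataclass, field


@dataclass
class TrickFolder:
    shot_name: str  # the folder is named after the shot it belongs to
    tracks: list[str] = field(default_factory=list)
    muted: bool = False


@dataclass
class SceneSequence:
    shots: list[str]       # camera tracks, one per shot
    beats: dict[int, str]  # marker frame -> beat label
    tricks: list[TrickFolder] = field(default_factory=list)


def select_shot(scene: SceneSequence, shot_name: str) -> None:
    """Mute every trick folder except the one named after the selected shot."""
    if shot_name not in scene.shots:
        raise ValueError(f"unknown shot: {shot_name}")
    for folder in scene.tricks:
        folder.muted = folder.shot_name != shot_name


scene = SceneSequence(
    shots=["Shot_010", "Shot_020"],
    beats={0: "arrival", 240: "storm breaks"},
    tricks=[
        TrickFolder("Shot_010", ["remove_lamp"]),
        TrickFolder("Shot_020", ["birds_flyby"]),
    ],
)
select_shot(scene, "Shot_020")
muted_state = [(f.shot_name, f.muted) for f in scene.tricks]
```

Because the folder name is the only link between a trick and its shot, the rule stays a single comparison, and adding or removing a trick never touches the algorithm.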

Some points remain: What about the cognitive dimension of abstraction (depth of control) and hard mental operations? What about the pipeline? And how did this lead to the animatic and final shotlist?

Levels of Abstraction in the Workflow

Let's address the Cognitive Dimension of Abstraction, or, in our case, Depth of Control: the ability to extend the workflow across its levels of abstraction.

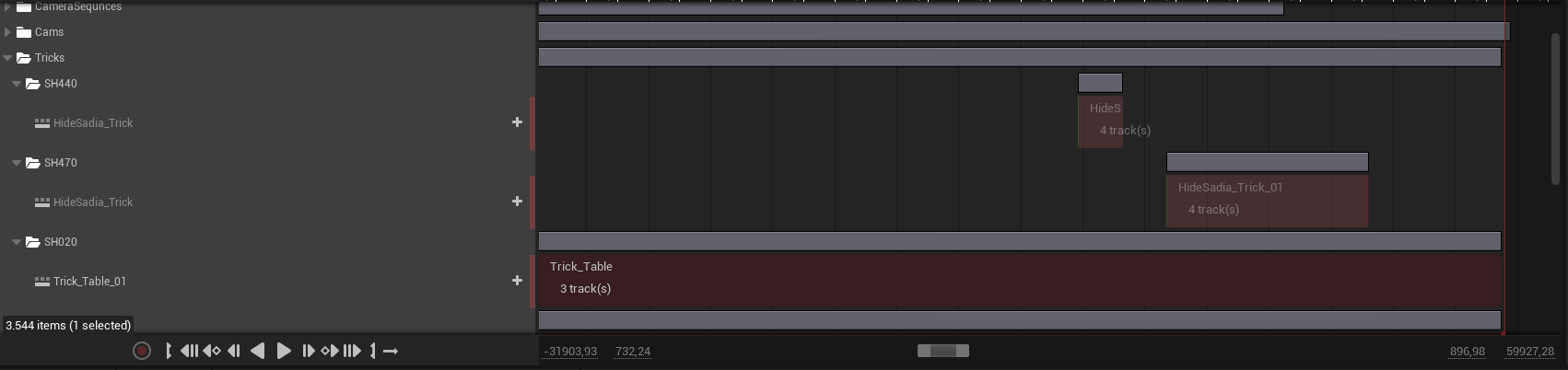

Remember, levels of abstraction in our model exist in both the horizontal and vertical dimensions. From our conceptualization, we established that blocking is the physical enactment (representation) of the events in the script. It is the line of action, free of any perspective, framing, or composition. The important observables are the positions of all actors and props at a certain moment in time, and the pacing of those changes in relation to the storytelling.

This, however, then becomes the content for the shots to capture. I called this workflow the framing. I noticed, once again, that—similar to how stage and scene are horizontal abstractions over the actor bindings in the track—framing and blocking are horizontal abstractions over the physical actors in the scene. One defines their position; the other captures it and puts it into frame for a certain duration.

In retrospect, I was surprised and pleased by how elegantly this example fit the idea of horizontal abstraction.

What was even more elegant was the vertical boundary from scene through shot to take.

The shot, implemented as a subsequence, provided multiple benefits. It hid the details of the take, namely its keyframes. More importantly, it acted as an anchor between two worlds that do not know of each other. Inside the context of the scene (the sequencer), the position of the shot was mapped in relation to the full length of the scene and to other shots. Yet the take could be replaced without changing that relation. For the sake of continuity and reduced cognitive friction, the take only needed to know its keyframes relative to its own boundaries. This meant that even when the shot was moved, the take would keep the same information.
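The vertical boundary between shot and take can be illustrated with a small Python model. It is a sketch under assumptions, not the engine's subsequence implementation: `Shot`, `Take`, and `scene_keyframes` are invented names, and keyframes are reduced to a single float value per frame.

```python
from dataclasses import dataclass


@dataclass
class Take:
    number: int
    keyframes: dict[int, float]  # local frame (0 = start of the take) -> value


@dataclass
class Shot:
    name: str
    start_frame: int  # position of the shot inside the scene
    takes: list[Take]
    active_take: int = 0  # index into takes

    def scene_keyframes(self) -> dict[int, float]:
        """Resolve the active take's local keyframes to scene time."""
        take = self.takes[self.active_take]
        return {self.start_frame + f: v for f, v in take.keyframes.items()}


shot = Shot(
    name="Shot_010",
    start_frame=100,
    takes=[Take(1, {0: 0.0, 24: 1.0}), Take(2, {0: 0.5, 48: 2.0})],
)
first = shot.scene_keyframes()

shot.active_take = 1    # swap the take: the shot's place in the scene is unchanged
swapped = shot.scene_keyframes()

shot.start_frame = 200  # move the shot: the take's own data is unchanged
moved = shot.scene_keyframes()
```

The scene only ever edits `start_frame` and `active_take`, and the take only ever stores local frames. Neither side needs to know how the other is built.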

Abstracting the shot as a subsequence was also the key for the pipeline.

The Pipeline

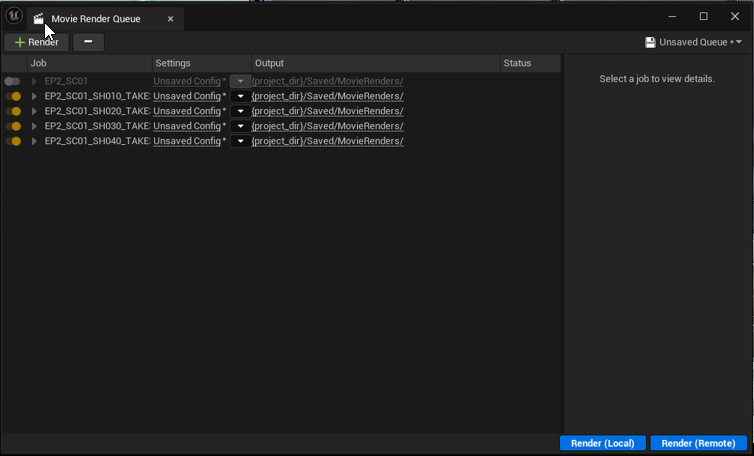

Enter the Movie Render Queue: Unreal Engine’s dedicated system for rendering sequences as videos or image sequences. More abstractly, it unified sequences as jobs with individual shots.

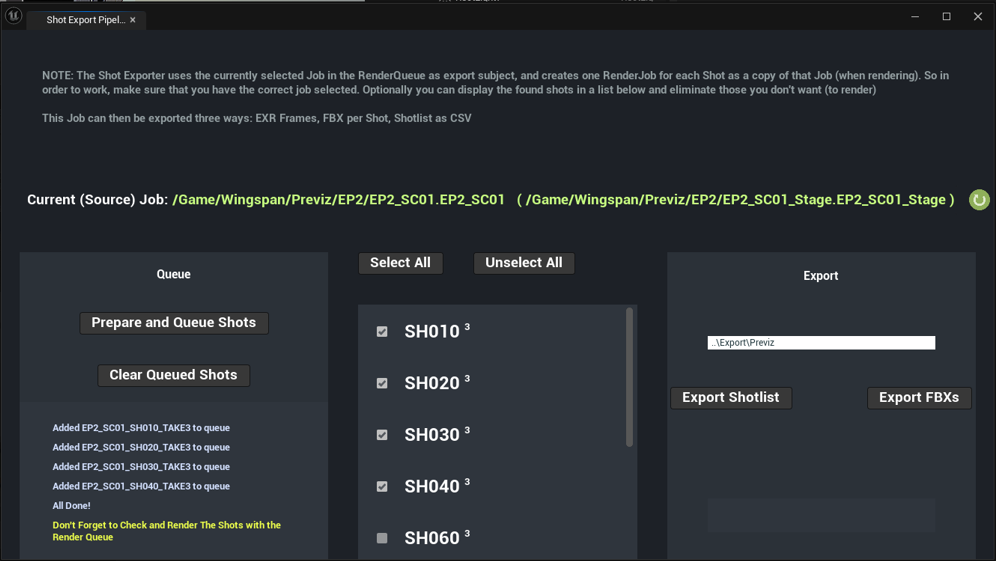

It took some work to make this a true pipeline. I implemented a shotlist exporter to CSV using Python and used the same pipeline to export FBX sequences for tracking in post-production, as well as to create an animatic for the editor to cut a rough version of the episodes. I exposed all of this through a custom GUI.
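As a rough idea of what the CSV side of such an exporter involves, here is a minimal sketch using only Python's standard `csv` module. The column names and the example rows are assumptions for illustration. The real exporter read its data from the sequencer, which is left out here.

```python
import csv
import io

# Hypothetical column layout for the exported shotlist.
COLUMNS = ["shot", "take", "start_frame", "end_frame", "notes"]


def export_shotlist(shots: list[dict], stream) -> None:
    """Write one CSV row per shot, ordered by where the shot starts in the scene."""
    writer = csv.DictWriter(stream, fieldnames=COLUMNS, extrasaction="ignore")
    writer.writeheader()
    for shot in sorted(shots, key=lambda s: s["start_frame"]):
        writer.writerow(shot)


shots = [
    {"shot": "Shot_020", "take": 1, "start_frame": 120, "end_frame": 240, "notes": "wide, rain"},
    {"shot": "Shot_010", "take": 3, "start_frame": 0, "end_frame": 120, "notes": ""},
]
buffer = io.StringIO()
export_shotlist(shots, buffer)
csv_text = buffer.getvalue()
```

When writing to a real file instead of a `StringIO`, open it with `newline=""` so the `csv` module controls the line endings itself.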

When I developed this specific solution, I realized something very important about abstraction when dealing with existing systems: abstraction can be used to avoid having to figure out everything on your own. Instead, you transform whatever you are working with to fit the abstraction that the feature you are plugging into expects, and the already implemented system takes care of the rest.

The Movie Pipeline creates a queue of jobs—the unique combination of a sequence, a level, and a configuration setting/profile. All the shots—the camera bindings on the sequencer—would be listed as sub-entries. However, they are rendered sequentially based on the main camera cut track (of which only one exists per sequence). I wanted to give the editor the most flexibility. In my abstraction of the workflow, shots represented camera angles that happen in parallel; therefore, all of them had to capture the entire length of the scene, only from different angles. This, of course, did not match our in-engine workflow, because we used the sequencer as a work-in-progress scene preview.

The solution started with three questions: What does the Render Queue abstraction need? What do we have? What is the difference, and how can we patch that difference?

I crafted a beautiful piece of Blueprint (unfit to be displayed on the web), so I will list the semantics from a high level of abstraction—which seems more fitting for this kind of article.

Given a job as input:

- Extract the sequence and the level.

- Display them as a preview for verification.

- List all shots and subsequences with a number for the selected take in superposition.

- The user can check which shots to include in the export.

- There are buttons to select all or deselect all.

- The user then has three options, as already mentioned:

  - Export as shotlist (.csv)

  - Export as FBX

  - Prepare shots as separate jobs

This is the central piece—this function:

- Copies the source sequence and makes a virtual asset in the temp folder (this way, the abstraction expected by the Asset Management system is maintained to avoid any problems).

- Deletes all the other shots.

- Extends the selected shot to span the full length of the scene.

- Mutes all tricks and leaves its own Trick Track active.
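The four steps above can be sketched on a simplified data model. This is plain Python, not Blueprint and not the Movie Render Queue API: `Sequence`, `ShotSection`, `TrickFolder`, and the `_tmp` naming are assumptions, and a deep copy stands in for the temporary asset.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class ShotSection:
    name: str
    start: int
    end: int


@dataclass
class TrickFolder:
    shot_name: str
    muted: bool = False


@dataclass
class Sequence:
    name: str
    start: int
    end: int
    shots: list[ShotSection] = field(default_factory=list)
    tricks: list[TrickFolder] = field(default_factory=list)


def prepare_shot_job(source: Sequence, shot_name: str) -> Sequence:
    """Build a temporary copy of the scene holding one full-length shot."""
    job = copy.deepcopy(source)                    # 1. copy, never touch the source
    job.name = f"{source.name}_{shot_name}_tmp"    # hypothetical naming scheme
    job.shots = [s for s in job.shots if s.name == shot_name]  # 2. drop other shots
    if not job.shots:
        raise ValueError(f"unknown shot: {shot_name}")
    for shot in job.shots:                         # 3. span the full scene length
        shot.start, shot.end = job.start, job.end
    for folder in job.tricks:                      # 4. keep only its own tricks
        folder.muted = folder.shot_name != shot_name
    return job


source = Sequence(
    name="Scene01",
    start=0,
    end=480,
    shots=[ShotSection("Shot_010", 0, 120), ShotSection("Shot_020", 120, 480)],
    tricks=[TrickFolder("Shot_010"), TrickFolder("Shot_020")],
)
job = prepare_shot_job(source, "Shot_010")
```

The result is still an ordinary sequence with one shot in it, so whatever consumes sequences downstream does not need a special case for it.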

I used my intuition for abstraction to forge a fake Movie Render Queue job, hijacking the existing system while at the same time ensuring it would be supported. By choosing this abstraction-first solution, I could completely skip my own implementation and, even better, skip worrying about unsupported edge cases.

And even when edge cases appeared, they were dealt with in a similar way.

Hard Mental Operations Fixed with Actor Actions

Finally, I want to address Hard Mental Operations. In my thesis, I had defined them as the steps needed to implement a goal. While technically true, this is too broad and misses the actual problem. If the GUI breaks down multiple steps, providing orientation and a point of reference, then even if more steps are required, they do not demand maintaining a mental stack.

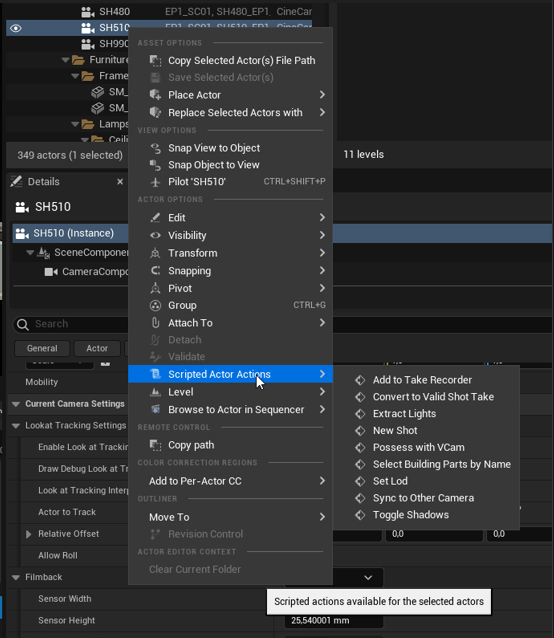

Remember: in our abstraction, a shot is a Camera in the Stage, which is an Actor in a Map. I also promised that using the existing Actor abstraction comes with many benefits. Time to cash them in. Actors can be enhanced with superpowers in the Editor using Scripted Actor Actions—my main strategy for implementing custom tools. This is the path of minimal friction. In the screenshot below, you can see several of these actions that accumulated over time.

One point of criticism: the interface for Scripted Actor Actions allows you to apply a filter on input by specifying a child actor class to be supported. However, this filter is not applied in the dropdown menu. So we have to ignore: Extract Lights, Select Building Parts by Name, Set Lod, and Toggle Shadows. These are related to working within the City, a complex geometry scene formed from districts as actors with nested buildings and facades.

Let’s briefly discuss the remaining Actions:

- Add To Take Recorder: An automation that integrates with the Take Recorder Widget from the Virtual Production plugin.

- New Shot: An automation for creating a new shot.

The Take Recorder had a completely different abstraction of Scene, Take, and Shots, which of course mismatched with ours.

The main concept of the Take Recorder was the physical Take inside a Slate, like on a real set. To maintain cohesive naming, we decided that the slate would remain one per scene (our scene). I also learned that you can select any number of actors from your level (our stage), which are then tracked while recording. These would then be written as keyframes to a sequence named SlateName_ActorName_Take. For our vertical abstraction on a shot track, we needed sequences with matching names, only with different take numbers. So, once again, the easiest solution was one of fitting, leading to multiple steps in the editor, which were automated:

1. Copy an existing shot to have a similar camera in name, position, and configuration.

2. Change its number.

3. Add it to the list of actors for the Take Recorder.

4. Deactivate all other actors.

5. Enable this shot.

6. Ensure the counter is reset based on the shot, or increase the counter for an existing shot.
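The counter logic in the last step can be sketched as a small naming helper. It assumes the `SlateName_ActorName_Take` pattern described above, with an unpadded take number and example names that are invented here. The function `next_take_name` is hypothetical and does not call the Take Recorder.

```python
import re


def next_take_name(slate: str, actor: str, existing: list[str]) -> str:
    """Return the next SlateName_ActorName_Take name for this slate and actor."""
    pattern = re.compile(rf"^{re.escape(slate)}_{re.escape(actor)}_(\d+)$")
    numbers = [int(m.group(1)) for name in existing if (m := pattern.match(name))]
    # A new shot starts at 1; an existing shot continues after its highest take.
    take = max(numbers, default=0) + 1
    return f"{slate}_{actor}_{take}"


existing = ["Scene01_Shot010_1", "Scene01_Shot010_2", "Scene01_Shot020_1"]
continued = next_take_name("Scene01", "Shot010", existing)
fresh = next_take_name("Scene01", "Shot030", existing)
```

Deriving the number from the names that already exist keeps the recorded sequences and the shot track in agreement without storing a separate counter.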

- Sync to other cams: A function to make one camera mimic another.

- Possess with VCam: This one was a true head-scratcher. Watch the next few steps closely:

I really love Unreal Engine. It is so complex that you can find abstractions in every corner of their toolset to make their many systems work together. However, this is an example of what happens when you apply too much abstraction.

The Take Recorder can integrate with a workflow using LiveLink to connect to the mobile app "VCam," which allows you to move around a room with an iPad mounted on a dolly to simulate real camera workflows. The app connects to a VCamActor. To ensure you don’t accidentally mess with an existing camera while moving around, they made the VCamActor a wrapper around a real camera.

The only problem is that they modeled this inside an abstract Modifier Stack that can contain all sorts of custom effects to apply to the camera to simulate reality. Inside that stack, at an arbitrary index (12) of the list, you would find, nested under Unreal UI Reflection, the subgroup "default," which allows you to select which camera the VCam should "attach" to. I kid you not. What you see above are the first instructions for my operator, who had to do this every time he wanted to physically record a new shot. Poor guy.

This is the exemplary case for Hard Mental Operations. I then figured out how to automate this step under the name Possess with VCam (an abstraction over the multiple highly arbitrary steps).

And finally, my favorite: Convert to Valid Shot Take. The operator and camerawoman recorded hundreds of shots over a few days. At a certain point, they even grew tired of using Possess with VCam, as they were going for mostly static or very clean moving shots. The operator, under time constraints, started to add cameras directly to the level without putting them on the sequencer, and even recorded keyframes directly onto the possessable track, which broke the Shot-to-Take abstraction built on the subsequence.

He was also unaware of the inner workings of my pipeline. After a full three days of "shooting," he confessed this modified workflow to me. After a good five minutes of shocked silence, I once again solved this big discrepancy with abstraction!

Think about it: how would you solve this? Probably by writing a few new edge cases inside the exporter to account for non-animated shots and for actor tracks on the original sequencer? Any of these would introduce massive inconsistencies with the elegant fake Sequence assets I was creating until then.

Instead, I created another Scripted Action that handles both cases. For a camera with an existing sequencer binding, it resolves the camera to that binding, extracts the binding's keyframes, places them into a new level sequence, and creates a "fake" take out of it. A camera without any sequencer binding is first bound to the sequencer and then treated like one that has keyframes.

This showed that defining an abstraction and then conforming any edge case or more specific abstraction back to the main abstraction helps to streamline the workflow.
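The conforming step can be sketched as a normalization function on a toy model. Everything here is an assumption for illustration: the types `Camera`, `Binding`, and `Scene`, the `_take_1` naming, and in particular the choice to give a static camera a single keyframe at its current position. It is not the actual Scripted Action.

```python
from dataclasses import dataclass, field
from typing import Optional

Vec3 = tuple[float, float, float]


@dataclass
class Camera:
    name: str
    position: Vec3


@dataclass
class Binding:
    camera: str
    keyframes: dict[int, Vec3] = field(default_factory=dict)  # keys recorded inline
    subsequence: Optional[str] = None  # name of the take this shot points at


@dataclass
class Scene:
    bindings: dict[str, Binding] = field(default_factory=dict)
    takes: dict[str, dict[int, Vec3]] = field(default_factory=dict)


def convert_to_valid_shot_take(scene: Scene, camera: Camera) -> str:
    """Conform any camera to the shot-as-subsequence abstraction."""
    binding = scene.bindings.get(camera.name)
    if binding is None:
        # Edge case: the camera was never put on the sequencer, so bind it first.
        binding = Binding(camera=camera.name)
        scene.bindings[camera.name] = binding
    if binding.subsequence is not None:
        return binding.subsequence  # already a valid shot
    # Assumption: a static camera becomes a single key at its current position.
    keys = binding.keyframes or {0: camera.position}
    take_name = f"{camera.name}_take_1"  # hypothetical naming
    scene.takes[take_name] = dict(keys)  # move the keys into a "fake" take
    binding.keyframes = {}
    binding.subsequence = take_name
    return take_name


scene = Scene()
scene.bindings["Cam_A"] = Binding("Cam_A", keyframes={0: (0.0, 0.0, 0.0), 48: (1.0, 0.0, 0.0)})
animated = convert_to_valid_shot_take(scene, Camera("Cam_A", (0.0, 0.0, 0.0)))
static = convert_to_valid_shot_take(scene, Camera("Cam_B", (5.0, 2.0, 1.0)))
```

After the conversion, both cameras look the same to the rest of the system: a binding that points at a take. The exporter never learns that the edge cases existed.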

Working on these tools felt like bending, ironing out, and gluing together existing abstractions to avoid having to build new, full-blown systems as I had done in the past. It was at this exact moment of realization that I felt the need to talk about this in my master's thesis, which brings me to my final section and conclusion.

Metaphors for Strategies Using Abstraction

Working with abstraction in this way felt new to me. Instead of being a single aspect, it became the main driving force to solve all my problems in strategic ways.

For me, strategies are concepts that define how and why we reach a goal, focusing on certain aspects and prioritizing resources over others to do so—again, an abstraction. I will now formulate a combined development and creative process that aims to maximize each dimension from the CDNf while utilizing and considering the defined dimensions of abstraction.

I will do so by means of three metaphors that can be applied to all levels of a project—whether on the purely conceptual side, the programming side, or by engaging the GUI.

The Lens

The lens directly embodies the idea of abstraction by providing a metaphor for focus and attention. When fully focused on the representational and logical space, we need to defocus to reach the conceptual space, where intuitive drifting becomes easier.

On the other hand, we can increase the focal length to observe only a partial reality. In this way, we may stay in the sharp world of concrete representation and/or logic, but only consider certain features. By reducing the focal length, we increase the scope and start to integrate details into a bigger picture: we move in the vertical dimension. From any focal length, we can move the lens horizontally to see a different aspect of an object.

This strategy maximizes Consistency and Conciseness. By decomposing and abstracting, we often find connections between systems that allow us to repurpose them. We then have components with multiple functions that, on one hand, fulfill a role as an abstraction for our personal intent, while also being an abstraction in the host system and fulfilling a role there. We can take advantage of the full feature set of the latter.

The Junction

The junction embodies the ability of abstraction to create boundaries. It also allows us to focus the traffic to a central pivot where multiple streams converge. It helps organize and structure without having to commit to every road that goes to or leaves from the junction. This metaphor becomes potent when we understand the junction as an unmovable truth—a boundary or glue. From here, we can move along any of the four dimensions: want to go deeper, higher, left, or right? Want to go more fundamental and conceptual? You have to cross the junction.

It also helps to centralize and streamline. Remember the example of the pipeline that expects everything to be subsequences. Instead of having multiple roads running through the dark movie pipeline tunnel, which may lead to collisions, we converge to a single accepted form—abstraction.

This strategy minimizes Viscosity, Error-Proneness, Hidden Dependencies, and Premature Commitment. It does so by providing boundaries where each end is loose. Things remain malleable and replaceable. This also allows us to ignore some implementation details and defer them to a future point in time. At the same time, it maximizes Depth of Control—meaning we can extend the system in any direction, vertical or horizontal, while the clear boundaries ensure that no area bleeds into another.

The Sculptor

The sculptor is the final metaphor I propose for working with abstraction. While the lens embodies abstraction literally, and the junction uses abstraction to streamline interconnected systems, the sculptor describes our role in using abstraction to create on top of existing systems for efficient results that can be evaluated as a whole at any time.

We begin with an empty compiled program, an empty scene, an empty packaged project, or an empty sheet of paper. Step by step, we carefully block in a first idea—not temporarily, as when prototyping; every dent has its purpose. It becomes a junction from which we can expand the system later on. But for now, all we are concerned with is defining the shape and following the natural surface—the existing abstraction—to keep a streamlined flow.

When introducing new elements, we first insert and then push and pull until we restore a smooth surface. We continue indefinitely, never losing sight of the big picture.

This strategy maximizes Progressive Evaluation, Consistency, and Closeness of Mapping, and arguably improves Visibility while reducing Hard Mental Operations. By always focusing on the output and the whole first, you have a full version of your project at any point in development.

You ensure consistency across multiple parts of the program, which stay close to your vision.

By having to implement and invent less yourself, you reduce the number of components you have to keep track of, and by ironing out edge cases, you eliminate those moments that cause a lot of cognitive friction.

A Conclusion About Elegance

When working with abstraction, we enter a mode of thinking in which we can see one object from multiple perspectives. It lets us elevate that object into a space where we can carefully set boundaries, implementing as much as we need for the task at hand while leaving room for extension later.

All of this ensures that we maintain consistency and right-size our solution to the problem at hand. I would like to leave you with one final idea, which is so beautiful I have to quote directly from Technology Strategy Patterns [1]:

"Beauty, for Vitruvius, isn’t really in the eye of the beholder. It is about harmony of proportion. One suggestion we can deduce from this for our current purposes is that we must rightsize our architecture and strategy work for the task at hand."

And I believe this is the true role of abstraction beyond programming!

Redemption

At the time, I was also working on the final systems, bug fixes, and polish of my game, which left me in a rather convoluted state of mind. I overreached with the theory, consulting over 50 sources in an attempt to find different uses of abstraction—starting with programming and extending far beyond it. I iterated on the thesis many times, introducing a wide range of topics as I tried to find connections and relate them to my work as a creative technologist. Ultimately, it became a broad investigation that, according to my evaluators, failed to make a central point.

In conclusion, abstraction is more than a simple principle used in art or programming. It is a conscious cognitive activity that you can apply to any decision or problem that involves conceptualization, representation, implementation, structure, or iterative work, and that requires a certain degree of mental effort or creativity. When you work beyond programming, inside an editor, the editor itself can enforce rules and provide tools for free. Respecting existing abstractions and established boundaries not only lets you understand the system better, it also lets you inject new features into a working, tested environment that already covers most use cases, reducing the cost of maintenance and the mental effort both at the moment of implementation and going forward.

My investigation into abstraction isn't an argument for less clarity; it’s a process of finding a solid foundation. By first loosening a definition and then committing to it, we can create a powerful structure to build and converge around. In a sense, abstraction embodies a sweet spot: vague enough to leave room for expansion, yet concrete enough to work with.

In the spirit of the thesis, let me finish with one elegant abstraction that answers it all.

Abstraction doesn't just create runtime boundaries; it creates structural ones too. The Sculptor Heuristic is what you arrive at when you take that topological insight and ask how the system can change, and for what reason.

This is Abstraction used Topologically.